diff --git a/api/apps/conversation_app.py b/api/apps/conversation_app.py

index 0d392bccf..f4d327993 100644

--- a/api/apps/conversation_app.py

+++ b/api/apps/conversation_app.py

@@ -163,6 +163,7 @@ def completion():

del req["conversation_id"]

del req["messages"]

ans = chat(dia, msg, **req)

+ if not conv.reference: conv.reference = []

conv.reference.append(ans["reference"])

conv.message.append({"role": "assistant", "content": ans["answer"]})

ConversationService.update_by_id(conv.id, conv.to_dict())

diff --git a/api/apps/dialog_app.py b/api/apps/dialog_app.py

index cc6f9810a..fccc0ecbb 100644

--- a/api/apps/dialog_app.py

+++ b/api/apps/dialog_app.py

@@ -32,7 +32,6 @@ def set_dialog():

dialog_id = req.get("dialog_id")

name = req.get("name", "New Dialog")

description = req.get("description", "A helpful Dialog")

- language = req.get("language", "Chinese")

top_n = req.get("top_n", 6)

similarity_threshold = req.get("similarity_threshold", 0.1)

vector_similarity_weight = req.get("vector_similarity_weight", 0.3)

@@ -80,7 +79,6 @@ def set_dialog():

"name": name,

"kb_ids": req["kb_ids"],

"description": description,

- "language": language,

"llm_id": llm_id,

"llm_setting": llm_setting,

"prompt_config": prompt_config,

diff --git a/api/apps/document_app.py b/api/apps/document_app.py

index 0649adaaa..9ce7e2b11 100644

--- a/api/apps/document_app.py

+++ b/api/apps/document_app.py

@@ -272,7 +272,9 @@ def get(doc_id):

response = flask.make_response(MINIO.get(doc.kb_id, doc.location))

ext = re.search(r"\.([^.]+)$", doc.name)

if ext:

- response.headers.set('Content-Type', 'application/%s'%ext.group(1))

+ if doc.type == FileType.VISUAL.value:

+ response.headers.set('Content-Type', 'image/%s'%ext.group(1))

+ else: response.headers.set('Content-Type', 'application/%s'%ext.group(1))

return response

except Exception as e:

return server_error_response(e)

diff --git a/api/db/db_models.py b/api/db/db_models.py

index 49aa169cf..9b2dc7ffb 100644

--- a/api/db/db_models.py

+++ b/api/db/db_models.py

@@ -464,6 +464,7 @@ class Knowledgebase(DataBaseModel):

avatar = TextField(null=True, help_text="avatar base64 string")

tenant_id = CharField(max_length=32, null=False)

name = CharField(max_length=128, null=False, help_text="KB name", index=True)

+ language = CharField(max_length=32, null=True, default="Chinese", help_text="English|Chinese")

description = TextField(null=True, help_text="KB description")

embd_id = CharField(max_length=128, null=False, help_text="default embedding model ID")

permission = CharField(max_length=16, null=False, help_text="me|team", default="me")

diff --git a/api/db/services/llm_service.py b/api/db/services/llm_service.py

index 6bc1150d7..2a9647402 100644

--- a/api/db/services/llm_service.py

+++ b/api/db/services/llm_service.py

@@ -57,7 +57,7 @@ class TenantLLMService(CommonService):

@classmethod

@DB.connection_context()

- def model_instance(cls, tenant_id, llm_type, llm_name=None):

+ def model_instance(cls, tenant_id, llm_type, llm_name=None, lang="Chinese"):

e, tenant = TenantService.get_by_id(tenant_id)

if not e:

raise LookupError("Tenant not found")

@@ -87,7 +87,7 @@ class TenantLLMService(CommonService):

if model_config["llm_factory"] not in CvModel:

return

return CvModel[model_config["llm_factory"]](

- model_config["api_key"], model_config["llm_name"])

+ model_config["api_key"], model_config["llm_name"], lang)

if llm_type == LLMType.CHAT.value:

if model_config["llm_factory"] not in ChatModel:

@@ -120,11 +120,11 @@ class TenantLLMService(CommonService):

class LLMBundle(object):

- def __init__(self, tenant_id, llm_type, llm_name=None):

+ def __init__(self, tenant_id, llm_type, llm_name=None, lang="Chinese"):

self.tenant_id = tenant_id

self.llm_type = llm_type

self.llm_name = llm_name

- self.mdl = TenantLLMService.model_instance(tenant_id, llm_type, llm_name)

+ self.mdl = TenantLLMService.model_instance(tenant_id, llm_type, llm_name, lang=lang)

assert self.mdl, "Can't find mole for {}/{}/{}".format(tenant_id, llm_type, llm_name)

def encode(self, texts: list, batch_size=32):

diff --git a/api/db/services/task_service.py b/api/db/services/task_service.py

index 87e84a12a..a63130150 100644

--- a/api/db/services/task_service.py

+++ b/api/db/services/task_service.py

@@ -27,7 +27,24 @@ class TaskService(CommonService):

@classmethod

@DB.connection_context()

def get_tasks(cls, tm, mod=0, comm=1, items_per_page=64):

- fields = [cls.model.id, cls.model.doc_id, cls.model.from_page,cls.model.to_page, Document.kb_id, Document.parser_id, Document.parser_config, Document.name, Document.type, Document.location, Document.size, Knowledgebase.tenant_id, Tenant.embd_id, Tenant.img2txt_id, Tenant.asr_id, cls.model.update_time]

+ fields = [

+ cls.model.id,

+ cls.model.doc_id,

+ cls.model.from_page,

+ cls.model.to_page,

+ Document.kb_id,

+ Document.parser_id,

+ Document.parser_config,

+ Document.name,

+ Document.type,

+ Document.location,

+ Document.size,

+ Knowledgebase.tenant_id,

+ Knowledgebase.language,

+ Tenant.embd_id,

+ Tenant.img2txt_id,

+ Tenant.asr_id,

+ cls.model.update_time]

docs = cls.model.select(*fields) \

.join(Document, on=(cls.model.doc_id == Document.id)) \

.join(Knowledgebase, on=(Document.kb_id == Knowledgebase.id)) \

@@ -42,7 +59,6 @@ class TaskService(CommonService):

.paginate(1, items_per_page)

return list(docs.dicts())

-

@classmethod

@DB.connection_context()

def do_cancel(cls, id):

@@ -54,12 +70,11 @@ class TaskService(CommonService):

pass

return True

-

@classmethod

@DB.connection_context()

def update_progress(cls, id, info):

- cls.model.update(progress_msg=cls.model.progress_msg + "\n"+info["progress_msg"]).where(

+ cls.model.update(progress_msg=cls.model.progress_msg + "\n" + info["progress_msg"]).where(

cls.model.id == id).execute()

if "progress" in info:

cls.model.update(progress=info["progress"]).where(

- cls.model.id == id).execute()

+ cls.model.id == id).execute()

diff --git a/api/utils/file_utils.py b/api/utils/file_utils.py

index b504cfef3..1d6f15f33 100644

--- a/api/utils/file_utils.py

+++ b/api/utils/file_utils.py

@@ -167,7 +167,11 @@ def thumbnail(filename, blob):

return "data:image/png;base64," + base64.b64encode(buffered.getvalue()).decode("utf-8")

if re.match(r".*\.(jpg|jpeg|png|tif|gif|icon|ico|webp)$", filename):

- return ("data:image/%s;base64,"%filename.split(".")[-1]) + base64.b64encode(Image.open(BytesIO(blob)).thumbnail((30, 30)).tobytes()).decode("utf-8")

+ image = Image.open(BytesIO(blob))

+ image.thumbnail((30, 30))

+ buffered = BytesIO()

+ image.save(buffered, format="png")

+ return "data:image/png;base64," + base64.b64encode(buffered.getvalue()).decode("utf-8")

if re.match(r".*\.(ppt|pptx)$", filename):

import aspose.slides as slides

diff --git a/deepdoc/README.md b/deepdoc/README.md

new file mode 100644

index 000000000..b2ad032dc

--- /dev/null

+++ b/deepdoc/README.md

@@ -0,0 +1,82 @@

+English | [简体中文](./README_zh.md)

+

+#*Deep*Doc

+

+---

+

+- [1. Introduction](#1)

+- [2. Vision](#2)

+- [3. Parser](#3)

+

+

+## 1. Introduction

+

+---

+With a bunch of documents from various domains with various formats and along with diverse retrieval requirements,

+an accurate analysis becomes a very challenge task. *Deep*Doc is born for that purpose.

+There 2 parts in *Deep*Doc so far: vision and parser.

+

+

+## 2. Vision

+

+---

+

+We use vision information to resolve problems as human being.

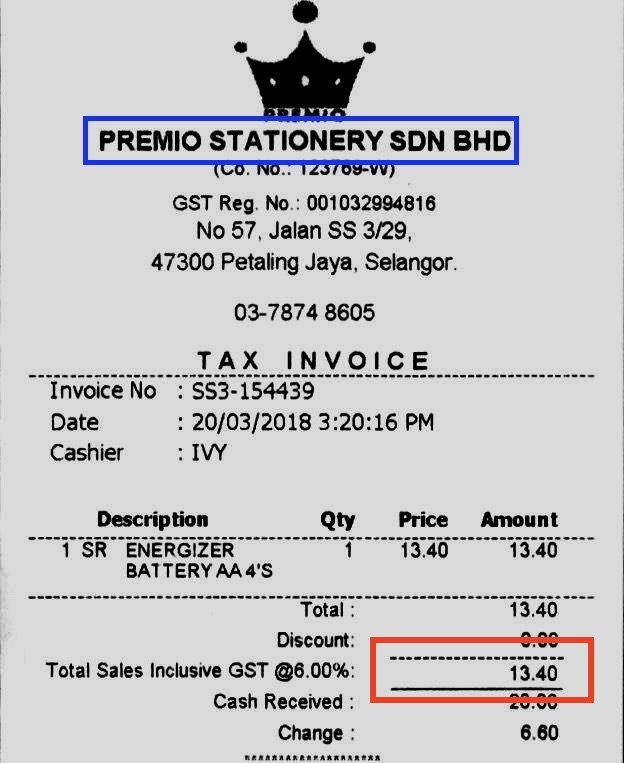

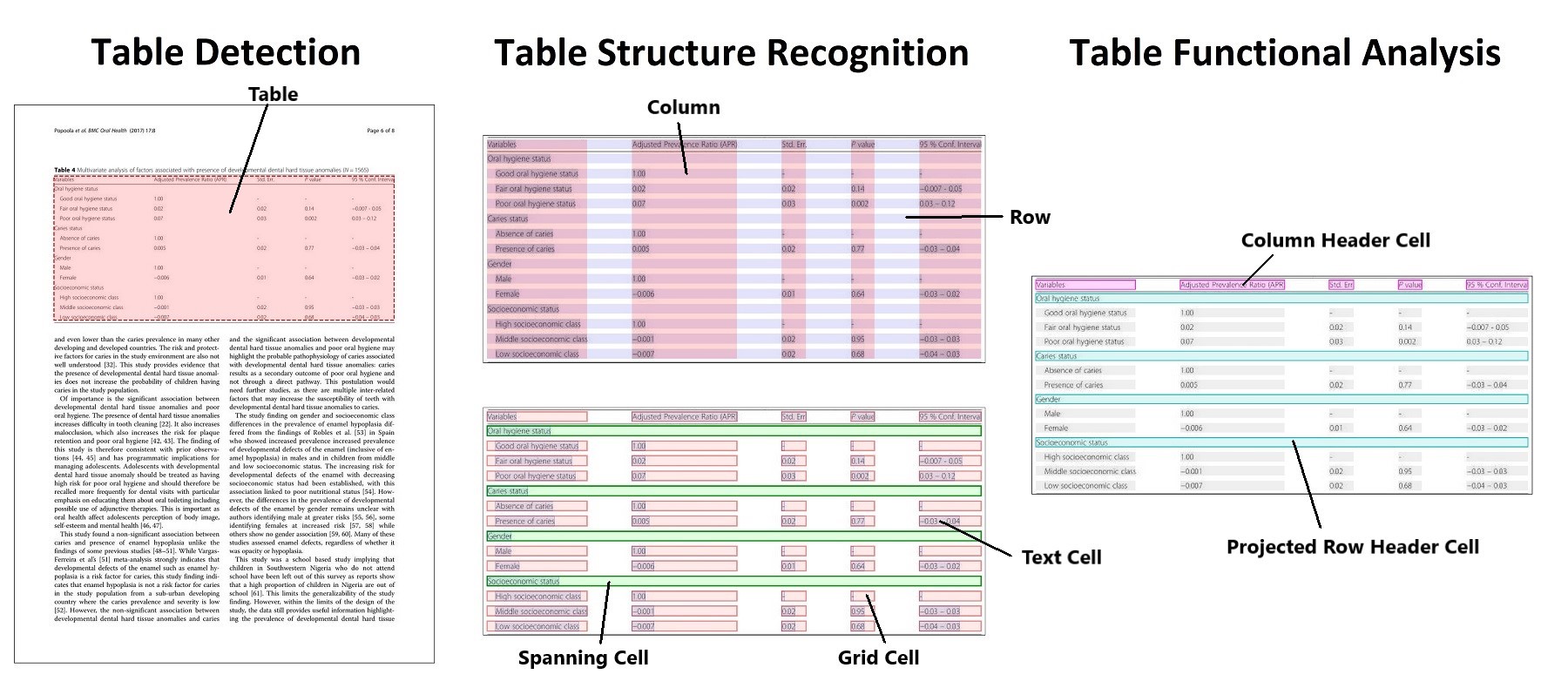

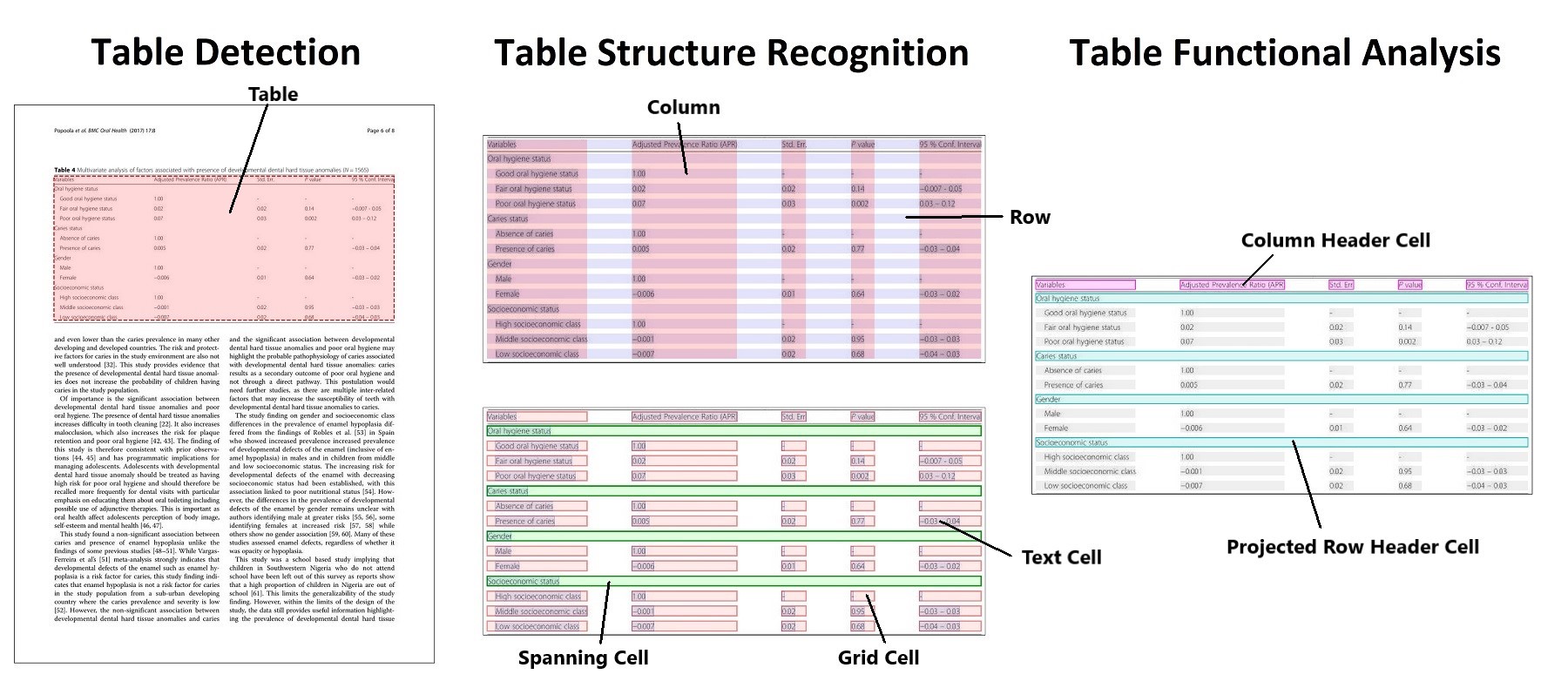

+ - OCR. Since a lot of documents presented as images or at least be able to transform to image,

+ OCR is a very essential and fundamental or even universal solution for text extraction.

+

+

+

+

+

+

+

+

+

+  +

+