Merge branch 'infiniflow:main' into feature/confluence-space-key

commit 1ea48a36c1

9 changed files with 80 additions and 49 deletions

|

|

@ -39,7 +39,7 @@ async def upload(tenant_id):

|

||||||

Upload a file to the system.

|

Upload a file to the system.

|

||||||

---

|

---

|

||||||

tags:

|

tags:

|

||||||

- File Management

|

- File

|

||||||

security:

|

security:

|

||||||

- ApiKeyAuth: []

|

- ApiKeyAuth: []

|

||||||

parameters:

|

parameters:

|

||||||

|

|

@ -155,7 +155,7 @@ async def create(tenant_id):

|

||||||

Create a new file or folder.

|

Create a new file or folder.

|

||||||

---

|

---

|

||||||

tags:

|

tags:

|

||||||

- File Management

|

- File

|

||||||

security:

|

security:

|

||||||

- ApiKeyAuth: []

|

- ApiKeyAuth: []

|

||||||

parameters:

|

parameters:

|

||||||

|

|

@ -233,7 +233,7 @@ async def list_files(tenant_id):

|

||||||

List files under a specific folder.

|

List files under a specific folder.

|

||||||

---

|

---

|

||||||

tags:

|

tags:

|

||||||

- File Management

|

- File

|

||||||

security:

|

security:

|

||||||

- ApiKeyAuth: []

|

- ApiKeyAuth: []

|

||||||

parameters:

|

parameters:

|

||||||

|

|

@ -325,7 +325,7 @@ async def get_root_folder(tenant_id):

|

||||||

Get user's root folder.

|

Get user's root folder.

|

||||||

---

|

---

|

||||||

tags:

|

tags:

|

||||||

- File Management

|

- File

|

||||||

security:

|

security:

|

||||||

- ApiKeyAuth: []

|

- ApiKeyAuth: []

|

||||||

responses:

|

responses:

|

||||||

|

|

@ -361,7 +361,7 @@ async def get_parent_folder():

|

||||||

Get parent folder info of a file.

|

Get parent folder info of a file.

|

||||||

---

|

---

|

||||||

tags:

|

tags:

|

||||||

- File Management

|

- File

|

||||||

security:

|

security:

|

||||||

- ApiKeyAuth: []

|

- ApiKeyAuth: []

|

||||||

parameters:

|

parameters:

|

||||||

|

|

@ -406,7 +406,7 @@ async def get_all_parent_folders(tenant_id):

|

||||||

Get all parent folders of a file.

|

Get all parent folders of a file.

|

||||||

---

|

---

|

||||||

tags:

|

tags:

|

||||||

- File Management

|

- File

|

||||||

security:

|

security:

|

||||||

- ApiKeyAuth: []

|

- ApiKeyAuth: []

|

||||||

parameters:

|

parameters:

|

||||||

|

|

@ -454,7 +454,7 @@ async def rm(tenant_id):

|

||||||

Delete one or multiple files/folders.

|

Delete one or multiple files/folders.

|

||||||

---

|

---

|

||||||

tags:

|

tags:

|

||||||

- File Management

|

- File

|

||||||

security:

|

security:

|

||||||

- ApiKeyAuth: []

|

- ApiKeyAuth: []

|

||||||

parameters:

|

parameters:

|

||||||

|

|

@ -528,7 +528,7 @@ async def rename(tenant_id):

|

||||||

Rename a file.

|

Rename a file.

|

||||||

---

|

---

|

||||||

tags:

|

tags:

|

||||||

- File Management

|

- File

|

||||||

security:

|

security:

|

||||||

- ApiKeyAuth: []

|

- ApiKeyAuth: []

|

||||||

parameters:

|

parameters:

|

||||||

|

|

@ -589,7 +589,7 @@ async def get(tenant_id, file_id):

|

||||||

Download a file.

|

Download a file.

|

||||||

---

|

---

|

||||||

tags:

|

tags:

|

||||||

- File Management

|

- File

|

||||||

security:

|

security:

|

||||||

- ApiKeyAuth: []

|

- ApiKeyAuth: []

|

||||||

produces:

|

produces:

|

||||||

|

|

@ -637,7 +637,7 @@ async def move(tenant_id):

|

||||||

Move one or multiple files to another folder.

|

Move one or multiple files to another folder.

|

||||||

---

|

---

|

||||||

tags:

|

tags:

|

||||||

- File Management

|

- File

|

||||||

security:

|

security:

|

||||||

- ApiKeyAuth: []

|

- ApiKeyAuth: []

|

||||||

parameters:

|

parameters:

|

||||||

|

|

|

||||||

|

|

@ -63,6 +63,7 @@ class MinerUParser(RAGFlowPdfParser):

|

||||||

self.logger = logging.getLogger(self.__class__.__name__)

|

self.logger = logging.getLogger(self.__class__.__name__)

|

||||||

|

|

||||||

def _extract_zip_no_root(self, zip_path, extract_to, root_dir):

|

def _extract_zip_no_root(self, zip_path, extract_to, root_dir):

|

||||||

|

self.logger.info(f"[MinerU] Extract zip: zip_path={zip_path}, extract_to={extract_to}, root_hint={root_dir}")

|

||||||

with zipfile.ZipFile(zip_path, "r") as zip_ref:

|

with zipfile.ZipFile(zip_path, "r") as zip_ref:

|

||||||

if not root_dir:

|

if not root_dir:

|

||||||

files = zip_ref.namelist()

|

files = zip_ref.namelist()

|

||||||

|

|

@ -72,7 +73,7 @@ class MinerUParser(RAGFlowPdfParser):

|

||||||

root_dir = None

|

root_dir = None

|

||||||

|

|

||||||

if not root_dir or not root_dir.endswith("/"):

|

if not root_dir or not root_dir.endswith("/"):

|

||||||

self.logger.info(f"[MinerU] No root directory found, extracting all...fff{root_dir}")

|

self.logger.info(f"[MinerU] No root directory found, extracting all (root_hint={root_dir})")

|

||||||

zip_ref.extractall(extract_to)

|

zip_ref.extractall(extract_to)

|

||||||

return

|

return

|

||||||

|

|

||||||

|

|

@ -108,7 +109,7 @@ class MinerUParser(RAGFlowPdfParser):

|

||||||

valid_backends = ["pipeline", "vlm-http-client", "vlm-transformers", "vlm-vllm-engine"]

|

valid_backends = ["pipeline", "vlm-http-client", "vlm-transformers", "vlm-vllm-engine"]

|

||||||

if backend not in valid_backends:

|

if backend not in valid_backends:

|

||||||

reason = f"[MinerU] Invalid backend '{backend}'. Valid backends are: {valid_backends}"

|

reason = f"[MinerU] Invalid backend '{backend}'. Valid backends are: {valid_backends}"

|

||||||

logging.warning(reason)

|

self.logger.warning(reason)

|

||||||

return False, reason

|

return False, reason

|

||||||

|

|

||||||

subprocess_kwargs = {

|

subprocess_kwargs = {

|

||||||

|

|

@ -128,40 +129,40 @@ class MinerUParser(RAGFlowPdfParser):

|

||||||

if backend == "vlm-http-client" and server_url:

|

if backend == "vlm-http-client" and server_url:

|

||||||

try:

|

try:

|

||||||

server_accessible = self._is_http_endpoint_valid(server_url + "/openapi.json")

|

server_accessible = self._is_http_endpoint_valid(server_url + "/openapi.json")

|

||||||

logging.info(f"[MinerU] vlm-http-client server check: {server_accessible}")

|

self.logger.info(f"[MinerU] vlm-http-client server check: {server_accessible}")

|

||||||

if server_accessible:

|

if server_accessible:

|

||||||

self.using_api = False # We are using http client, not API

|

self.using_api = False # We are using http client, not API

|

||||||

return True, reason

|

return True, reason

|

||||||

else:

|

else:

|

||||||

reason = f"[MinerU] vlm-http-client server not accessible: {server_url}"

|

reason = f"[MinerU] vlm-http-client server not accessible: {server_url}"

|

||||||

logging.warning(f"[MinerU] vlm-http-client server not accessible: {server_url}")

|

self.logger.warning(f"[MinerU] vlm-http-client server not accessible: {server_url}")

|

||||||

return False, reason

|

return False, reason

|

||||||

except Exception as e:

|

except Exception as e:

|

||||||

logging.warning(f"[MinerU] vlm-http-client server check failed: {e}")

|

self.logger.warning(f"[MinerU] vlm-http-client server check failed: {e}")

|

||||||

try:

|

try:

|

||||||

response = requests.get(server_url, timeout=5)

|

response = requests.get(server_url, timeout=5)

|

||||||

logging.info(f"[MinerU] vlm-http-client server connection check: success with status {response.status_code}")

|

self.logger.info(f"[MinerU] vlm-http-client server connection check: success with status {response.status_code}")

|

||||||

self.using_api = False

|

self.using_api = False

|

||||||

return True, reason

|

return True, reason

|

||||||

except Exception as e:

|

except Exception as e:

|

||||||

reason = f"[MinerU] vlm-http-client server connection check failed: {server_url}: {e}"

|

reason = f"[MinerU] vlm-http-client server connection check failed: {server_url}: {e}"

|

||||||

logging.warning(f"[MinerU] vlm-http-client server connection check failed: {server_url}: {e}")

|

self.logger.warning(f"[MinerU] vlm-http-client server connection check failed: {server_url}: {e}")

|

||||||

return False, reason

|

return False, reason

|

||||||

|

|

||||||

try:

|

try:

|

||||||

result = subprocess.run([str(self.mineru_path), "--version"], **subprocess_kwargs)

|

result = subprocess.run([str(self.mineru_path), "--version"], **subprocess_kwargs)

|

||||||

version_info = result.stdout.strip()

|

version_info = result.stdout.strip()

|

||||||

if version_info:

|

if version_info:

|

||||||

logging.info(f"[MinerU] Detected version: {version_info}")

|

self.logger.info(f"[MinerU] Detected version: {version_info}")

|

||||||

else:

|

else:

|

||||||

logging.info("[MinerU] Detected MinerU, but version info is empty.")

|

self.logger.info("[MinerU] Detected MinerU, but version info is empty.")

|

||||||

return True, reason

|

return True, reason

|

||||||

except subprocess.CalledProcessError as e:

|

except subprocess.CalledProcessError as e:

|

||||||

logging.warning(f"[MinerU] Execution failed (exit code {e.returncode}).")

|

self.logger.warning(f"[MinerU] Execution failed (exit code {e.returncode}).")

|

||||||

except FileNotFoundError:

|

except FileNotFoundError:

|

||||||

logging.warning("[MinerU] MinerU not found. Please install it via: pip install -U 'mineru[core]'")

|

self.logger.warning("[MinerU] MinerU not found. Please install it via: pip install -U 'mineru[core]'")

|

||||||

except Exception as e:

|

except Exception as e:

|

||||||

logging.error(f"[MinerU] Unexpected error during installation check: {e}")

|

self.logger.error(f"[MinerU] Unexpected error during installation check: {e}")

|

||||||

|

|

||||||

# If executable check fails, try API check

|

# If executable check fails, try API check

|

||||||

try:

|

try:

|

||||||

|

|

@ -171,14 +172,14 @@ class MinerUParser(RAGFlowPdfParser):

|

||||||

if not openapi_exists:

|

if not openapi_exists:

|

||||||

reason = "[MinerU] Failed to detect valid MinerU API server"

|

reason = "[MinerU] Failed to detect valid MinerU API server"

|

||||||

return openapi_exists, reason

|

return openapi_exists, reason

|

||||||

logging.info(f"[MinerU] Detected {self.mineru_api}/openapi.json: {openapi_exists}")

|

self.logger.info(f"[MinerU] Detected {self.mineru_api}/openapi.json: {openapi_exists}")

|

||||||

self.using_api = openapi_exists

|

self.using_api = openapi_exists

|

||||||

return openapi_exists, reason

|

return openapi_exists, reason

|

||||||

else:

|

else:

|

||||||

logging.info("[MinerU] api not exists.")

|

self.logger.info("[MinerU] api not exists.")

|

||||||

except Exception as e:

|

except Exception as e:

|

||||||

reason = f"[MinerU] Unexpected error during api check: {e}"

|

reason = f"[MinerU] Unexpected error during api check: {e}"

|

||||||

logging.error(f"[MinerU] Unexpected error during api check: {e}")

|

self.logger.error(f"[MinerU] Unexpected error during api check: {e}")

|

||||||

return False, reason

|

return False, reason

|

||||||

|

|

||||||

def _run_mineru(

|

def _run_mineru(

|

||||||

|

|

@ -314,7 +315,7 @@ class MinerUParser(RAGFlowPdfParser):

|

||||||

except Exception as e:

|

except Exception as e:

|

||||||

self.page_images = None

|

self.page_images = None

|

||||||

self.total_page = 0

|

self.total_page = 0

|

||||||

logging.exception(e)

|

self.logger.exception(e)

|

||||||

|

|

||||||

def _line_tag(self, bx):

|

def _line_tag(self, bx):

|

||||||

pn = [bx["page_idx"] + 1]

|

pn = [bx["page_idx"] + 1]
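The hunks above replace module-level `logging.*` calls with `self.logger`, which is created from `logging.getLogger(self.__class__.__name__)`. As a minimal sketch of what that changes, the snippet below uses hypothetical classes (`BaseParser`, `DemoParser`, `ListHandler`), none of which are in the commit. It shows that each record then carries the concrete class name as its logger name, so it can be filtered or routed per parser.

```python
import logging


class BaseParser:
    def __init__(self):
        # Same pattern as the hunk: one logger per concrete class name.
        self.logger = logging.getLogger(self.__class__.__name__)

    def check(self):
        self.logger.warning("[Demo] check failed")


class DemoParser(BaseParser):
    """Hypothetical subclass; its records are named 'DemoParser'."""


class ListHandler(logging.Handler):
    """Collects records in memory so the logger name can be inspected."""

    def __init__(self):
        super().__init__()
        self.records = []

    def emit(self, record):
        self.records.append(record)


handler = ListHandler()
# Attach the handler to the named logger only; the root logger is untouched.
logging.getLogger("DemoParser").addHandler(handler)
DemoParser().check()
```

With the old `logging.warning(...)` calls, the same message would have been emitted by the root logger and could not be told apart by name.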

|

||||||

|

|

@ -480,15 +481,49 @@ class MinerUParser(RAGFlowPdfParser):

|

||||||

|

|

||||||

json_file = None

|

json_file = None

|

||||||

subdir = None

|

subdir = None

|

||||||

|

attempted = []

|

||||||

|

|

||||||

|

# mirror MinerU's sanitize_filename to align ZIP naming

|

||||||

|

def _sanitize_filename(name: str) -> str:

|

||||||

|

sanitized = re.sub(r"[/\\\.]{2,}|[/\\]", "", name)

|

||||||

|

sanitized = re.sub(r"[^\w.-]", "_", sanitized, flags=re.UNICODE)

|

||||||

|

if sanitized.startswith("."):

|

||||||

|

sanitized = "_" + sanitized[1:]

|

||||||

|

return sanitized or "unnamed"

|

||||||

|

|

||||||

|

safe_stem = _sanitize_filename(file_stem)

|

||||||

|

allowed_names = {f"{file_stem}_content_list.json", f"{safe_stem}_content_list.json"}

|

||||||

|

self.logger.info(f"[MinerU] Expected output files: {', '.join(sorted(allowed_names))}")

|

||||||

|

self.logger.info(f"[MinerU] Searching output candidates: {', '.join(str(c) for c in candidates)}")

|

||||||

|

|

||||||

for sub in candidates:

|

for sub in candidates:

|

||||||

jf = sub / f"{file_stem}_content_list.json"

|

jf = sub / f"{file_stem}_content_list.json"

|

||||||

|

self.logger.info(f"[MinerU] Trying original path: {jf}")

|

||||||

|

attempted.append(jf)

|

||||||

if jf.exists():

|

if jf.exists():

|

||||||

subdir = sub

|

subdir = sub

|

||||||

json_file = jf

|

json_file = jf

|

||||||

break

|

break

|

||||||

|

|

||||||

|

# MinerU API sanitizes non-ASCII filenames inside the ZIP root and file names.

|

||||||

|

alt = sub / f"{safe_stem}_content_list.json"

|

||||||

|

self.logger.info(f"[MinerU] Trying sanitized filename: {alt}")

|

||||||

|

attempted.append(alt)

|

||||||

|

if alt.exists():

|

||||||

|

subdir = sub

|

||||||

|

json_file = alt

|

||||||

|

break

|

||||||

|

|

||||||

|

nested_alt = sub / safe_stem / f"{safe_stem}_content_list.json"

|

||||||

|

self.logger.info(f"[MinerU] Trying sanitized nested path: {nested_alt}")

|

||||||

|

attempted.append(nested_alt)

|

||||||

|

if nested_alt.exists():

|

||||||

|

subdir = nested_alt.parent

|

||||||

|

json_file = nested_alt

|

||||||

|

break

|

||||||

|

|

||||||

if not json_file:

|

if not json_file:

|

||||||

raise FileNotFoundError(f"[MinerU] Missing output file, tried: {', '.join(str(c / (file_stem + '_content_list.json')) for c in candidates)}")

|

raise FileNotFoundError(f"[MinerU] Missing output file, tried: {', '.join(str(p) for p in attempted)}")

|

||||||

|

|

||||||

with open(json_file, "r", encoding="utf-8") as f:

|

with open(json_file, "r", encoding="utf-8") as f:

|

||||||

data = json.load(f)

|

data = json.load(f)
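The lookup order added in this hunk can be sketched in isolation as follows. The two regular expressions are copied from the hunk. The function name `find_content_list` and its return shape are assumptions made for illustration, not part of the commit.

```python
import re
from pathlib import Path


def sanitize_filename(name: str) -> str:
    # Same substitutions as the helper added in this hunk.
    sanitized = re.sub(r"[/\\\.]{2,}|[/\\]", "", name)
    sanitized = re.sub(r"[^\w.-]", "_", sanitized, flags=re.UNICODE)
    if sanitized.startswith("."):
        sanitized = "_" + sanitized[1:]
    return sanitized or "unnamed"


def find_content_list(candidates, file_stem):
    """Probe each candidate directory in the order used by the hunk.

    Returns (json_file, subdir, attempted); json_file is None when
    nothing was found. The name and return shape are hypothetical.
    """
    safe_stem = sanitize_filename(file_stem)
    attempted = []
    for sub in candidates:
        probes = [
            # 1. original stem, 2. sanitized stem, 3. sanitized nested dir
            (sub / f"{file_stem}_content_list.json", sub),
            (sub / f"{safe_stem}_content_list.json", sub),
            (sub / safe_stem / f"{safe_stem}_content_list.json", sub / safe_stem),
        ]
        for path, subdir in probes:
            attempted.append(path)
            if path.exists():
                return path, subdir, attempted
    return None, None, attempted
```

Recording every probed path in `attempted` is what lets the new `FileNotFoundError` message list the sanitized and nested paths as well as the original one.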

|

||||||

|

|

|

||||||

|

|

@ -170,7 +170,7 @@ TZ=Asia/Shanghai

|

||||||

# Uncomment the following line if your operating system is MacOS:

|

# Uncomment the following line if your operating system is MacOS:

|

||||||

# MACOS=1

|

# MACOS=1

|

||||||

|

|

||||||

# The maximum file size limit (in bytes) for each upload to your knowledge base or File Management.

|

# The maximum file size limit (in bytes) for each upload to your dataset or RAGFlow's File system.

|

||||||

# To change the 1GB file size limit, uncomment the line below and update as needed.

|

# To change the 1GB file size limit, uncomment the line below and update as needed.

|

||||||

# MAX_CONTENT_LENGTH=1073741824

|

# MAX_CONTENT_LENGTH=1073741824

|

||||||

# After updating, ensure `client_max_body_size` in nginx/nginx.conf is updated accordingly.

|

# After updating, ensure `client_max_body_size` in nginx/nginx.conf is updated accordingly.
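The two settings named in these comments have to agree. A small sketch of the arithmetic follows, using a hypothetical helper `upload_limit_settings` that is not part of RAGFlow. It assumes the limit is a whole number of GiB and writes the nginx value in megabytes with the `m` suffix.

```python
def upload_limit_settings(gib: int) -> dict:
    """Return matching values for the .env file and nginx.conf.

    Hypothetical helper for illustration only.
    """
    size_bytes = gib * 1024 ** 3  # MAX_CONTENT_LENGTH is given in bytes
    return {
        "MAX_CONTENT_LENGTH": str(size_bytes),
        "client_max_body_size": f"{gib * 1024}m",
    }


# The 1 GB default mentioned above corresponds to 1073741824 bytes.
default = upload_limit_settings(1)
doubled = upload_limit_settings(2)
```

Here `default["MAX_CONTENT_LENGTH"]` reproduces the `1073741824` shown in the commented-out line.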

|

||||||

|

|

|

||||||

|

|

@ -76,5 +76,5 @@ No. Files uploaded to an agent as input are not stored in a dataset and hence wi

|

||||||

There is no _specific_ file size limit for a file uploaded to an agent. However, note that model providers typically have a default or explicit maximum token setting, which can range from 8196 to 128k: The plain text part of the uploaded file will be passed in as the key value, but if the file's token count exceeds this limit, the string will be truncated and incomplete.

|

There is no _specific_ file size limit for a file uploaded to an agent. However, note that model providers typically have a default or explicit maximum token setting, which can range from 8196 to 128k: The plain text part of the uploaded file will be passed in as the key value, but if the file's token count exceeds this limit, the string will be truncated and incomplete.

|

||||||

|

|

||||||

:::tip NOTE

|

:::tip NOTE

|

||||||

The variables `MAX_CONTENT_LENGTH` in `/docker/.env` and `client_max_body_size` in `/docker/nginx/nginx.conf` set the file size limit for each upload to a dataset or **File Management**. These settings DO NOT apply in this scenario.

|

The variables `MAX_CONTENT_LENGTH` in `/docker/.env` and `client_max_body_size` in `/docker/nginx/nginx.conf` set the file size limit for each upload to a dataset or RAGFlow's File system. These settings DO NOT apply in this scenario.

|

||||||

:::

|

:::

|

||||||

|

|

|

||||||

|

|

@ -9,7 +9,7 @@ Initiate an AI-powered chat with a configured chat assistant.

|

||||||

|

|

||||||

---

|

---

|

||||||

|

|

||||||

Knowledge base, hallucination-free chat, and file management are the three pillars of RAGFlow. Chats in RAGFlow are based on a particular dataset or multiple datasets. Once you have created your dataset, finished file parsing, and [run a retrieval test](../dataset/run_retrieval_test.md), you can go ahead and start an AI conversation.

|

Chats in RAGFlow are based on a particular dataset or multiple datasets. Once you have created your dataset, finished file parsing, and [run a retrieval test](../dataset/run_retrieval_test.md), you can go ahead and start an AI conversation.

|

||||||

|

|

||||||

## Start an AI chat

|

## Start an AI chat

|

||||||

|

|

||||||

|

|

|

||||||

|

|

@ -5,7 +5,7 @@ slug: /configure_knowledge_base

|

||||||

|

|

||||||

# Configure dataset

|

# Configure dataset

|

||||||

|

|

||||||

Most of RAGFlow's chat assistants and Agents are based on datasets. Each of RAGFlow's datasets serves as a knowledge source, *parsing* files uploaded from your local machine and file references generated in **File Management** into the real 'knowledge' for future AI chats. This guide demonstrates some basic usages of the dataset feature, covering the following topics:

|

Most of RAGFlow's chat assistants and Agents are based on datasets. Each of RAGFlow's datasets serves as a knowledge source, *parsing* files uploaded from your local machine and file references generated in RAGFlow's File system into the real 'knowledge' for future AI chats. This guide demonstrates some basic usages of the dataset feature, covering the following topics:

|

||||||

|

|

||||||

- Create a dataset

|

- Create a dataset

|

||||||

- Configure a dataset

|

- Configure a dataset

|

||||||

|

|

@ -82,10 +82,10 @@ Some embedding models are optimized for specific languages, so performance may b

|

||||||

|

|

||||||

### Upload file

|

### Upload file

|

||||||

|

|

||||||

- RAGFlow's **File Management** allows you to link a file to multiple datasets, in which case each target dataset holds a reference to the file.

|

- RAGFlow's File system allows you to link a file to multiple datasets, in which case each target dataset holds a reference to the file.

|

||||||

- In **Knowledge Base**, you are also given the option of uploading a single file or a folder of files (bulk upload) from your local machine to a dataset, in which case the dataset holds file copies.

|

- In **Knowledge Base**, you are also given the option of uploading a single file or a folder of files (bulk upload) from your local machine to a dataset, in which case the dataset holds file copies.

|

||||||

|

|

||||||

While uploading files directly to a dataset seems more convenient, we *highly* recommend uploading files to **File Management** and then linking them to the target datasets. This way, you can avoid permanently deleting files uploaded to the dataset.

|

While uploading files directly to a dataset seems more convenient, we *highly* recommend uploading files to RAGFlow's File system and then linking them to the target datasets. This way, you can avoid permanently deleting files uploaded to the dataset.

|

||||||

|

|

||||||

### Parse file

|

### Parse file

|

||||||

|

|

||||||

|

|

@ -142,6 +142,6 @@ As of RAGFlow v0.22.1, the search feature is still in a rudimentary form, suppor

|

||||||

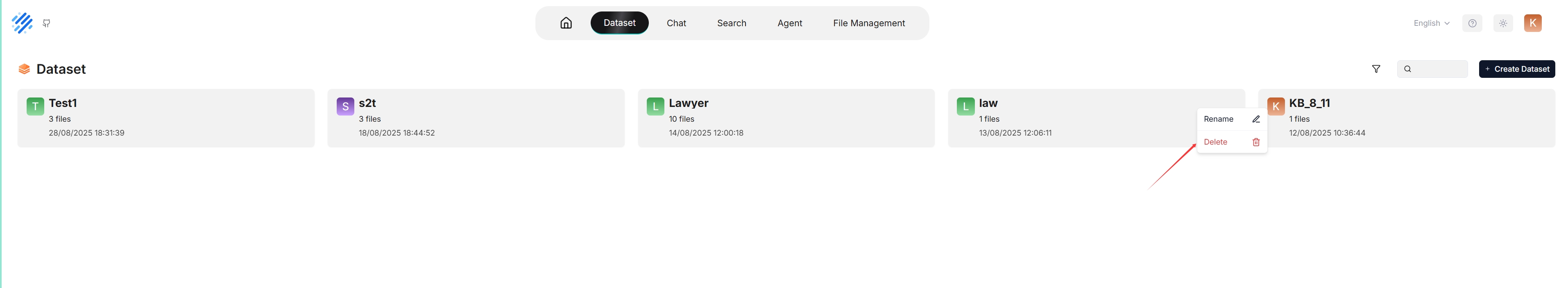

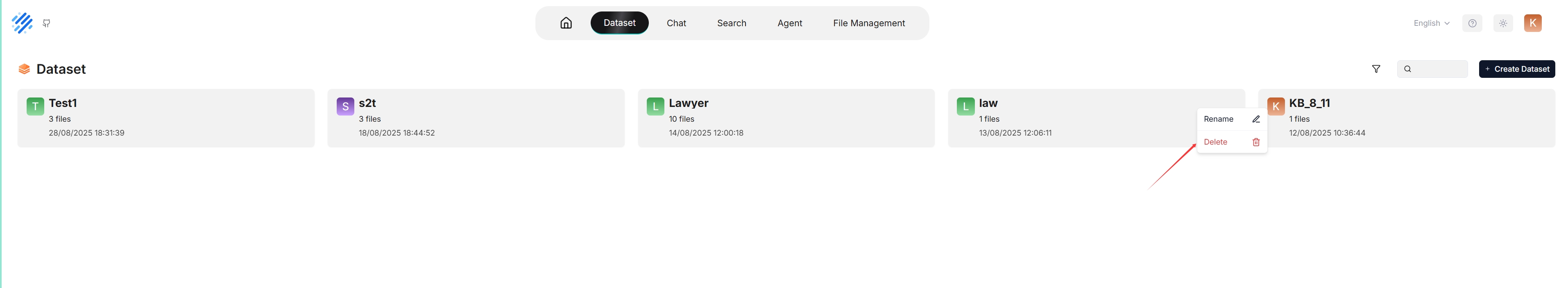

You are allowed to delete a dataset. Hover your mouse over the three dots of the intended dataset card and the **Delete** option appears. Once you delete a dataset, the associated folder under **root/.knowledge** directory is AUTOMATICALLY REMOVED. The consequence is:

|

You are allowed to delete a dataset. Hover your mouse over the three dots of the intended dataset card and the **Delete** option appears. Once you delete a dataset, the associated folder under **root/.knowledge** directory is AUTOMATICALLY REMOVED. The consequence is:

|

||||||

|

|

||||||

- The files uploaded directly to the dataset are gone;

|

- The files uploaded directly to the dataset are gone;

|

||||||

- The file references, which you created from within **File Management**, are gone, but the associated files still exist in **File Management**.

|

- The file references, which you created from within RAGFlow's File system, are gone, but the associated files still exist.

|

||||||

|

|

||||||

|

|

||||||

|

|

|

||||||

|

|

@ -57,7 +57,7 @@ async def run_graphrag(

|

||||||

start = trio.current_time()

|

start = trio.current_time()

|

||||||

tenant_id, kb_id, doc_id = row["tenant_id"], str(row["kb_id"]), row["doc_id"]

|

tenant_id, kb_id, doc_id = row["tenant_id"], str(row["kb_id"]), row["doc_id"]

|

||||||

chunks = []

|

chunks = []

|

||||||

for d in settings.retriever.chunk_list(doc_id, tenant_id, [kb_id], fields=["content_with_weight", "doc_id"], sort_by_position=True):

|

for d in settings.retriever.chunk_list(doc_id, tenant_id, [kb_id], max_count=10000, fields=["content_with_weight", "doc_id"], sort_by_position=True):

|

||||||

chunks.append(d["content_with_weight"])

|

chunks.append(d["content_with_weight"])

|

||||||

|

|

||||||

with trio.fail_after(max(120, len(chunks) * 60 * 10) if enable_timeout_assertion else 10000000000):

|

with trio.fail_after(max(120, len(chunks) * 60 * 10) if enable_timeout_assertion else 10000000000):

|

||||||

|

|

@ -174,13 +174,19 @@ async def run_graphrag_for_kb(

|

||||||

chunks = []

|

chunks = []

|

||||||

current_chunk = ""

|

current_chunk = ""

|

||||||

|

|

||||||

for d in settings.retriever.chunk_list(

|

# DEBUG: Fetch all chunks first

|

||||||

|

raw_chunks = list(settings.retriever.chunk_list(

|

||||||

doc_id,

|

doc_id,

|

||||||

tenant_id,

|

tenant_id,

|

||||||

[kb_id],

|

[kb_id],

|

||||||

|

max_count=10000, # FIX: Raise the limit so that all chunks are processed

|

||||||

fields=fields_for_chunks,

|

fields=fields_for_chunks,

|

||||||

sort_by_position=True,

|

sort_by_position=True,

|

||||||

):

|

))

|

||||||

|

|

||||||

|

callback(msg=f"[DEBUG] chunk_list() returned {len(raw_chunks)} raw chunks for doc {doc_id}")

|

||||||

|

|

||||||

|

for d in raw_chunks:

|

||||||

content = d["content_with_weight"]

|

content = d["content_with_weight"]

|

||||||

if num_tokens_from_string(current_chunk + content) < 1024:

|

if num_tokens_from_string(current_chunk + content) < 1024:

|

||||||

current_chunk += content

|

current_chunk += content
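The visible part of this loop greedily concatenates chunk text while the combined token count stays below 1024. The sketch below illustrates that idea under two assumptions, since the hunk shows neither: the `else` branch flushes the current buffer and starts a new one, and tokens are counted by whitespace splitting in place of `num_tokens_from_string`. The function name `merge_chunks` is hypothetical.

```python
def merge_chunks(contents, limit=1024, count_tokens=lambda s: len(s.split())):
    """Greedily merge consecutive strings while under the token limit.

    The flush-on-overflow behaviour is an assumption; the diff only
    shows the branch that appends to the current buffer.
    """
    merged = []
    current = ""
    for content in contents:
        if count_tokens(current + content) < limit:
            current += content
        else:
            if current:
                merged.append(current)
            current = content
    if current:
        merged.append(current)  # keep the trailing partial buffer
    return merged


example = merge_chunks(["a b ", "c d ", "e f "], limit=5)
```

Materialising `raw_chunks` as a list first, as the new code does, does not change this merge; it only makes the number of fetched chunks available for the debug callback.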

|

||||||

|

|

|

||||||

|

|

@ -537,7 +537,8 @@ class Dealer:

|

||||||

doc["id"] = id

|

doc["id"] = id

|

||||||

if dict_chunks:

|

if dict_chunks:

|

||||||

res.extend(dict_chunks.values())

|

res.extend(dict_chunks.values())

|

||||||

if len(dict_chunks.values()) < bs:

|

# FIX: Only stop when no chunks are returned, not when fewer than bs are returned

|

||||||

|

if len(dict_chunks.values()) == 0:

|

||||||

break

|

break

|

||||||

return res

|

return res
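The changed stop condition can be illustrated with a generic paging loop. This is a sketch, not the `Dealer` implementation: `fetch_page`, `collect_until_short_page` and `collect_until_empty_page` are hypothetical names, and the fake backend that drops one row per page is only one possible reason a page might come back shorter than `bs` before the data is exhausted.

```python
def collect_until_short_page(fetch_page, bs, max_count):
    """Old rule: stop as soon as a page has fewer than bs rows."""
    res = []
    for offset in range(0, max_count, bs):
        page = fetch_page(offset, bs)
        res.extend(page)
        if len(page) < bs:
            break
    return res


def collect_until_empty_page(fetch_page, bs, max_count):
    """New rule: stop only when a page comes back empty."""
    res = []
    for offset in range(0, max_count, bs):
        page = fetch_page(offset, bs)
        res.extend(page)
        if len(page) == 0:
            break
    return res


ROWS = list(range(10))


def fake_fetch(offset, limit):
    # Hypothetical backend: row 2 is filtered out after slicing,
    # so the first page is short although more rows follow.
    return [r for r in ROWS[offset:offset + limit] if r != 2]


old_result = collect_until_short_page(fake_fetch, bs=4, max_count=10000)
new_result = collect_until_empty_page(fake_fetch, bs=4, max_count=10000)
```

The trade-off is one extra request at the end, because the loop now needs to see an empty page before it stops.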

|

||||||

|

|

||||||

|

|

|

||||||

|

|

@ -1905,16 +1905,5 @@ Tokenizer 会根据所选方式将内容存储为对应的数据结构。`,

|

||||||

searchTitle: '尚未创建搜索应用',

|

searchTitle: '尚未创建搜索应用',

|

||||||

addNow: '立即添加',

|

addNow: '立即添加',

|

||||||

},

|

},

|

||||||

|

|

||||||

deleteModal: {

|

|

||||||

delAgent: '删除智能体',

|

|

||||||

delDataset: '删除知识库',

|

|

||||||

delSearch: '删除搜索',

|

|

||||||

delFile: '删除文件',

|

|

||||||

delFiles: '删除文件',

|

|

||||||

delFilesContent: '已选择 {{count}} 个文件',

|

|

||||||

delChat: '删除聊天',

|

|

||||||

delMember: '删除成员',

|

|

||||||

},

|

|

||||||

},

|

},

|

||||||

};

|

};

|

||||||

|

|

|

||||||
