Merge branch 'main' of github.com:infiniflow/ragflow into feature/1203

This commit is contained in:

commit

14e7007745

39 changed files with 210 additions and 147 deletions

|

|

@ -478,7 +478,7 @@ class Canvas(Graph):

|

|||

})

|

||||

await _run_batch(idx, to)

|

||||

to = len(self.path)

|

||||

# post processing of components invocation

|

||||

# post-processing of components invocation

|

||||

for i in range(idx, to):

|

||||

cpn = self.get_component(self.path[i])

|

||||

cpn_obj = self.get_component_obj(self.path[i])

|

||||

|

|

|

|||

|

|

@ -193,7 +193,7 @@

|

|||

"presence_penalty": 0.4,

|

||||

"prompts": [

|

||||

{

|

||||

"content": "Text Content:\n{Splitter:KindDingosJam@chunks}\n",

|

||||

"content": "Text Content:\n{Splitter:NineTiesSin@chunks}\n",

|

||||

"role": "user"

|

||||

}

|

||||

],

|

||||

|

|

@ -226,7 +226,7 @@

|

|||

"presence_penalty": 0.4,

|

||||

"prompts": [

|

||||

{

|

||||

"content": "Text Content:\n\n{Splitter:KindDingosJam@chunks}\n",

|

||||

"content": "Text Content:\n\n{Splitter:TastyPointsLay@chunks}\n",

|

||||

"role": "user"

|

||||

}

|

||||

],

|

||||

|

|

@ -259,7 +259,7 @@

|

|||

"presence_penalty": 0.4,

|

||||

"prompts": [

|

||||

{

|

||||

"content": "Content: \n\n{Splitter:KindDingosJam@chunks}",

|

||||

"content": "Content: \n\n{Splitter:CuteBusesBet@chunks}",

|

||||

"role": "user"

|

||||

}

|

||||

],

|

||||

|

|

@ -485,7 +485,7 @@

|

|||

"outputs": {},

|

||||

"presencePenaltyEnabled": false,

|

||||

"presence_penalty": 0.4,

|

||||

"prompts": "Text Content:\n{Splitter:KindDingosJam@chunks}\n",

|

||||

"prompts": "Text Content:\n{Splitter:NineTiesSin@chunks}\n",

|

||||

"sys_prompt": "Role\nYou are a text analyzer.\n\nTask\nExtract the most important keywords/phrases of a given piece of text content.\n\nRequirements\n- Summarize the text content, and give the top 5 important keywords/phrases.\n- The keywords MUST be in the same language as the given piece of text content.\n- The keywords are delimited by ENGLISH COMMA.\n- Output keywords ONLY.",

|

||||

"temperature": 0.1,

|

||||

"temperatureEnabled": false,

|

||||

|

|

@ -522,7 +522,7 @@

|

|||

"outputs": {},

|

||||

"presencePenaltyEnabled": false,

|

||||

"presence_penalty": 0.4,

|

||||

"prompts": "Text Content:\n\n{Splitter:KindDingosJam@chunks}\n",

|

||||

"prompts": "Text Content:\n\n{Splitter:TastyPointsLay@chunks}\n",

|

||||

"sys_prompt": "Role\nYou are a text analyzer.\n\nTask\nPropose 3 questions about a given piece of text content.\n\nRequirements\n- Understand and summarize the text content, and propose the top 3 important questions.\n- The questions SHOULD NOT have overlapping meanings.\n- The questions SHOULD cover the main content of the text as much as possible.\n- The questions MUST be in the same language as the given piece of text content.\n- One question per line.\n- Output questions ONLY.",

|

||||

"temperature": 0.1,

|

||||

"temperatureEnabled": false,

|

||||

|

|

@ -559,7 +559,7 @@

|

|||

"outputs": {},

|

||||

"presencePenaltyEnabled": false,

|

||||

"presence_penalty": 0.4,

|

||||

"prompts": "Content: \n\n{Splitter:KindDingosJam@chunks}",

|

||||

"prompts": "Content: \n\n{Splitter:BlueResultsWink@chunks}",

|

||||

"sys_prompt": "Extract important structured information from the given content. Output ONLY a valid JSON string with no additional text. If no important structured information is found, output an empty JSON object: {}.\n\nImportant structured information may include: names, dates, locations, events, key facts, numerical data, or other extractable entities.",

|

||||

"temperature": 0.1,

|

||||

"temperatureEnabled": false,

|

||||

|

|

|

|||

|

|

@ -75,7 +75,7 @@ class YahooFinance(ToolBase, ABC):

|

|||

@timeout(int(os.environ.get("COMPONENT_EXEC_TIMEOUT", 60)))

|

||||

def _invoke(self, **kwargs):

|

||||

if self.check_if_canceled("YahooFinance processing"):

|

||||

return

|

||||

return None

|

||||

|

||||

if not kwargs.get("stock_code"):

|

||||

self.set_output("report", "")

|

||||

|

|

@ -84,33 +84,33 @@ class YahooFinance(ToolBase, ABC):

|

|||

last_e = ""

|

||||

for _ in range(self._param.max_retries+1):

|

||||

if self.check_if_canceled("YahooFinance processing"):

|

||||

return

|

||||

return None

|

||||

|

||||

yohoo_res = []

|

||||

yahoo_res = []

|

||||

try:

|

||||

msft = yf.Ticker(kwargs["stock_code"])

|

||||

if self.check_if_canceled("YahooFinance processing"):

|

||||

return

|

||||

return None

|

||||

|

||||

if self._param.info:

|

||||

yohoo_res.append("# Information:\n" + pd.Series(msft.info).to_markdown() + "\n")

|

||||

yahoo_res.append("# Information:\n" + pd.Series(msft.info).to_markdown() + "\n")

|

||||

if self._param.history:

|

||||

yohoo_res.append("# History:\n" + msft.history().to_markdown() + "\n")

|

||||

yahoo_res.append("# History:\n" + msft.history().to_markdown() + "\n")

|

||||

if self._param.financials:

|

||||

yohoo_res.append("# Calendar:\n" + pd.DataFrame(msft.calendar).to_markdown() + "\n")

|

||||

yahoo_res.append("# Calendar:\n" + pd.DataFrame(msft.calendar).to_markdown() + "\n")

|

||||

if self._param.balance_sheet:

|

||||

yohoo_res.append("# Balance sheet:\n" + msft.balance_sheet.to_markdown() + "\n")

|

||||

yohoo_res.append("# Quarterly balance sheet:\n" + msft.quarterly_balance_sheet.to_markdown() + "\n")

|

||||

yahoo_res.append("# Balance sheet:\n" + msft.balance_sheet.to_markdown() + "\n")

|

||||

yahoo_res.append("# Quarterly balance sheet:\n" + msft.quarterly_balance_sheet.to_markdown() + "\n")

|

||||

if self._param.cash_flow_statement:

|

||||

yohoo_res.append("# Cash flow statement:\n" + msft.cashflow.to_markdown() + "\n")

|

||||

yohoo_res.append("# Quarterly cash flow statement:\n" + msft.quarterly_cashflow.to_markdown() + "\n")

|

||||

yahoo_res.append("# Cash flow statement:\n" + msft.cashflow.to_markdown() + "\n")

|

||||

yahoo_res.append("# Quarterly cash flow statement:\n" + msft.quarterly_cashflow.to_markdown() + "\n")

|

||||

if self._param.news:

|

||||

yohoo_res.append("# News:\n" + pd.DataFrame(msft.news).to_markdown() + "\n")

|

||||

self.set_output("report", "\n\n".join(yohoo_res))

|

||||

yahoo_res.append("# News:\n" + pd.DataFrame(msft.news).to_markdown() + "\n")

|

||||

self.set_output("report", "\n\n".join(yahoo_res))

|

||||

return self.output("report")

|

||||

except Exception as e:

|

||||

if self.check_if_canceled("YahooFinance processing"):

|

||||

return

|

||||

return None

|

||||

|

||||

last_e = e

|

||||

logging.exception(f"YahooFinance error: {e}")

|

||||

|

|

|

|||

|

|

@ -180,7 +180,7 @@ def login_user(user, remember=False, duration=None, force=False, fresh=True):

|

|||

user's `is_active` property is ``False``, they will not be logged in

|

||||

unless `force` is ``True``.

|

||||

|

||||

This will return ``True`` if the log in attempt succeeds, and ``False`` if

|

||||

This will return ``True`` if the login attempt succeeds, and ``False`` if

|

||||

it fails (i.e. because the user is inactive).

|

||||

|

||||

:param user: The user object to log in.

|

||||

|

|

|

|||

|

|

@ -552,7 +552,7 @@ def list_docs(dataset_id, tenant_id):

|

|||

create_time_from = int(q.get("create_time_from", 0))

|

||||

create_time_to = int(q.get("create_time_to", 0))

|

||||

|

||||

# map run status (accept text or numeric) - align with API parameter

|

||||

# map run status (text or numeric) - align with API parameter

|

||||

run_status_text_to_numeric = {"UNSTART": "0", "RUNNING": "1", "CANCEL": "2", "DONE": "3", "FAIL": "4"}

|

||||

run_status_converted = [run_status_text_to_numeric.get(v, v) for v in run_status]

|

||||

|

||||

|

|

@ -890,7 +890,7 @@ def list_chunks(tenant_id, dataset_id, document_id):

|

|||

type: string

|

||||

required: false

|

||||

default: ""

|

||||

description: Chunk Id.

|

||||

description: Chunk id.

|

||||

- in: header

|

||||

name: Authorization

|

||||

type: string

|

||||

|

|

|

|||

|

|

@ -126,7 +126,7 @@ class OnyxConfluence:

|

|||

def _renew_credentials(self) -> tuple[dict[str, Any], bool]:

|

||||

"""credential_json - the current json credentials

|

||||

Returns a tuple

|

||||

1. The up to date credentials

|

||||

1. The up-to-date credentials

|

||||

2. True if the credentials were updated

|

||||

|

||||

This method is intended to be used within a distributed lock.

|

||||

|

|

@ -179,8 +179,8 @@ class OnyxConfluence:

|

|||

credential_json["confluence_refresh_token"],

|

||||

)

|

||||

|

||||

# store the new credentials to redis and to the db thru the provider

|

||||

# redis: we use a 5 min TTL because we are given a 10 minute grace period

|

||||

# store the new credentials to redis and to the db through the provider

|

||||

# redis: we use a 5 min TTL because we are given a 10 minutes grace period

|

||||

# when keys are rotated. it's easier to expire the cached credentials

|

||||

# reasonably frequently rather than trying to handle strong synchronization

|

||||

# between the db and redis everywhere the credentials might be updated

|

||||

|

|

@ -690,7 +690,7 @@ class OnyxConfluence:

|

|||

) -> Iterator[dict[str, Any]]:

|

||||

"""

|

||||

This function will paginate through the top level query first, then

|

||||

paginate through all of the expansions.

|

||||

paginate through all the expansions.

|

||||

"""

|

||||

|

||||

def _traverse_and_update(data: dict | list) -> None:

|

||||

|

|

@ -863,7 +863,7 @@ def get_user_email_from_username__server(

|

|||

# For now, we'll just return None and log a warning. This means

|

||||

# we will keep retrying to get the email every group sync.

|

||||

email = None

|

||||

# We may want to just return a string that indicates failure so we dont

|

||||

# We may want to just return a string that indicates failure so we don't

|

||||

# keep retrying

|

||||

# email = f"FAILED TO GET CONFLUENCE EMAIL FOR {user_name}"

|

||||

_USER_EMAIL_CACHE[user_name] = email

|

||||

|

|

@ -912,7 +912,7 @@ def extract_text_from_confluence_html(

|

|||

confluence_object: dict[str, Any],

|

||||

fetched_titles: set[str],

|

||||

) -> str:

|

||||

"""Parse a Confluence html page and replace the 'user Id' by the real

|

||||

"""Parse a Confluence html page and replace the 'user id' by the real

|

||||

User Display Name

|

||||

|

||||

Args:

|

||||

|

|

|

|||

|

|

@ -76,7 +76,7 @@ ALL_ACCEPTED_FILE_EXTENSIONS = ACCEPTED_PLAIN_TEXT_FILE_EXTENSIONS + ACCEPTED_DO

|

|||

|

||||

MAX_RETRIEVER_EMAILS = 20

|

||||

CHUNK_SIZE_BUFFER = 64 # extra bytes past the limit to read

|

||||

# This is not a standard valid unicode char, it is used by the docs advanced API to

|

||||

# This is not a standard valid Unicode char, it is used by the docs advanced API to

|

||||

# represent smart chips (elements like dates and doc links).

|

||||

SMART_CHIP_CHAR = "\ue907"

|

||||

WEB_VIEW_LINK_KEY = "webViewLink"

|

||||

|

|

|

|||

|

|

@ -141,7 +141,7 @@ def crawl_folders_for_files(

|

|||

# Only mark a folder as done if it was fully traversed without errors

|

||||

# This usually indicates that the owner of the folder was impersonated.

|

||||

# In cases where this never happens, most likely the folder owner is

|

||||

# not part of the google workspace in question (or for oauth, the authenticated

|

||||

# not part of the Google Workspace in question (or for oauth, the authenticated

|

||||

# user doesn't own the folder)

|

||||

if found_files:

|

||||

update_traversed_ids_func(parent_id)

|

||||

|

|

@ -232,7 +232,7 @@ def get_files_in_shared_drive(

|

|||

**kwargs,

|

||||

):

|

||||

# If we found any files, mark this drive as traversed. When a user has access to a drive,

|

||||

# they have access to all the files in the drive. Also not a huge deal if we re-traverse

|

||||

# they have access to all the files in the drive. Also, not a huge deal if we re-traverse

|

||||

# empty drives.

|

||||

# NOTE: ^^ the above is not actually true due to folder restrictions:

|

||||

# https://support.google.com/a/users/answer/12380484?hl=en

|

||||

|

|

|

|||

|

|

@ -22,7 +22,7 @@ class GDriveMimeType(str, Enum):

|

|||

MARKDOWN = "text/markdown"

|

||||

|

||||

|

||||

# These correspond to The major stages of retrieval for google drive.

|

||||

# These correspond to The major stages of retrieval for Google Drive.

|

||||

# The stages for the oauth flow are:

|

||||

# get_all_files_for_oauth(),

|

||||

# get_all_drive_ids(),

|

||||

|

|

@ -117,7 +117,7 @@ class GoogleDriveCheckpoint(ConnectorCheckpoint):

|

|||

|

||||

class RetrievedDriveFile(BaseModel):

|

||||

"""

|

||||

Describes a file that has been retrieved from google drive.

|

||||

Describes a file that has been retrieved from Google Drive.

|

||||

user_email is the email of the user that the file was retrieved

|

||||

by impersonating. If an error worthy of being reported is encountered,

|

||||

error should be set and later propagated as a ConnectorFailure.

|

||||

|

|

|

|||

|

|

@ -29,8 +29,8 @@ class GmailService(Resource):

|

|||

|

||||

class RefreshableDriveObject:

|

||||

"""

|

||||

Running Google drive service retrieval functions

|

||||

involves accessing methods of the service object (ie. files().list())

|

||||

Running Google Drive service retrieval functions

|

||||

involves accessing methods of the service object (i.e. files().list())

|

||||

which can raise a RefreshError if the access token is expired.

|

||||

This class is a wrapper that propagates the ability to refresh the access token

|

||||

and retry the final retrieval function until execute() is called.

|

||||

|

|

|

|||

|

|

@ -120,7 +120,7 @@ def format_document_soup(

|

|||

# table is standard HTML element

|

||||

if e.name == "table":

|

||||

in_table = True

|

||||

# tr is for rows

|

||||

# TR is for rows

|

||||

elif e.name == "tr" and in_table:

|

||||

text += "\n"

|

||||

# td for data cell, th for header

|

||||

|

|

|

|||

|

|

@ -395,8 +395,7 @@ class AttachmentProcessingResult(BaseModel):

|

|||

|

||||

|

||||

class IndexingHeartbeatInterface(ABC):

|

||||

"""Defines a callback interface to be passed to

|

||||

to run_indexing_entrypoint."""

|

||||

"""Defines a callback interface to be passed to run_indexing_entrypoint."""

|

||||

|

||||

@abstractmethod

|

||||

def should_stop(self) -> bool:

|

||||

|

|

|

|||

|

|

@ -80,7 +80,7 @@ _TZ_OFFSET_PATTERN = re.compile(r"([+-])(\d{2})(:?)(\d{2})$")

|

|||

|

||||

|

||||

class JiraConnector(CheckpointedConnectorWithPermSync, SlimConnectorWithPermSync):

|

||||

"""Retrieve Jira issues and emit them as markdown documents."""

|

||||

"""Retrieve Jira issues and emit them as Markdown documents."""

|

||||

|

||||

def __init__(

|

||||

self,

|

||||

|

|

|

|||

|

|

@ -54,8 +54,8 @@ class ExternalAccess:

|

|||

A helper function that returns an *empty* set of external user-emails and group-ids, and sets `is_public` to `False`.

|

||||

This effectively makes the document in question "private" or inaccessible to anyone else.

|

||||

|

||||

This is especially helpful to use when you are performing permission-syncing, and some document's permissions aren't able

|

||||

to be determined (for whatever reason). Setting its `ExternalAccess` to "private" is a feasible fallback.

|

||||

This is especially helpful to use when you are performing permission-syncing, and some document's permissions can't

|

||||

be determined (for whatever reason). Setting its `ExternalAccess` to "private" is a feasible fallback.

|

||||

"""

|

||||

|

||||

return cls(

|

||||

|

|

|

|||

|

|

@ -24,7 +24,9 @@ logger = logging.getLogger(__name__)

|

|||

# Default knobs; keep conservative to avoid unexpected behavioural changes.

|

||||

DEFAULT_TIMEOUT = float(os.environ.get("HTTP_CLIENT_TIMEOUT", "15"))

|

||||

# Align with requests default: follow redirects with a max of 30 unless overridden.

|

||||

DEFAULT_FOLLOW_REDIRECTS = bool(int(os.environ.get("HTTP_CLIENT_FOLLOW_REDIRECTS", "1")))

|

||||

DEFAULT_FOLLOW_REDIRECTS = bool(

|

||||

int(os.environ.get("HTTP_CLIENT_FOLLOW_REDIRECTS", "1"))

|

||||

)

|

||||

DEFAULT_MAX_REDIRECTS = int(os.environ.get("HTTP_CLIENT_MAX_REDIRECTS", "30"))

|

||||

DEFAULT_MAX_RETRIES = int(os.environ.get("HTTP_CLIENT_MAX_RETRIES", "2"))

|

||||

DEFAULT_BACKOFF_FACTOR = float(os.environ.get("HTTP_CLIENT_BACKOFF_FACTOR", "0.5"))

|

||||

|

|

@ -32,7 +34,9 @@ DEFAULT_PROXY = os.environ.get("HTTP_CLIENT_PROXY")

|

|||

DEFAULT_USER_AGENT = os.environ.get("HTTP_CLIENT_USER_AGENT", "ragflow-http-client")

|

||||

|

||||

|

||||

def _clean_headers(headers: Optional[Dict[str, str]], auth_token: Optional[str] = None) -> Optional[Dict[str, str]]:

|

||||

def _clean_headers(

|

||||

headers: Optional[Dict[str, str]], auth_token: Optional[str] = None

|

||||

) -> Optional[Dict[str, str]]:

|

||||

merged_headers: Dict[str, str] = {}

|

||||

if DEFAULT_USER_AGENT:

|

||||

merged_headers["User-Agent"] = DEFAULT_USER_AGENT

|

||||

|

|

@ -59,39 +63,51 @@ async def async_request(

|

|||

auth_token: Optional[str] = None,

|

||||

retries: Optional[int] = None,

|

||||

backoff_factor: Optional[float] = None,

|

||||

proxies: Any = None,

|

||||

proxy: Any = None,

|

||||

**kwargs: Any,

|

||||

) -> httpx.Response:

|

||||

"""Lightweight async HTTP wrapper using httpx.AsyncClient with safe defaults."""

|

||||

timeout = timeout if timeout is not None else DEFAULT_TIMEOUT

|

||||

follow_redirects = DEFAULT_FOLLOW_REDIRECTS if follow_redirects is None else follow_redirects

|

||||

follow_redirects = (

|

||||

DEFAULT_FOLLOW_REDIRECTS if follow_redirects is None else follow_redirects

|

||||

)

|

||||

max_redirects = DEFAULT_MAX_REDIRECTS if max_redirects is None else max_redirects

|

||||

retries = DEFAULT_MAX_RETRIES if retries is None else max(retries, 0)

|

||||

backoff_factor = DEFAULT_BACKOFF_FACTOR if backoff_factor is None else backoff_factor

|

||||

backoff_factor = (

|

||||

DEFAULT_BACKOFF_FACTOR if backoff_factor is None else backoff_factor

|

||||

)

|

||||

headers = _clean_headers(headers, auth_token=auth_token)

|

||||

proxies = DEFAULT_PROXY if proxies is None else proxies

|

||||

proxy = DEFAULT_PROXY if proxy is None else proxy

|

||||

|

||||

async with httpx.AsyncClient(

|

||||

timeout=timeout,

|

||||

follow_redirects=follow_redirects,

|

||||

max_redirects=max_redirects,

|

||||

proxies=proxies,

|

||||

proxy=proxy,

|

||||

) as client:

|

||||

last_exc: Exception | None = None

|

||||

for attempt in range(retries + 1):

|

||||

try:

|

||||

start = time.monotonic()

|

||||

response = await client.request(method=method, url=url, headers=headers, **kwargs)

|

||||

response = await client.request(

|

||||

method=method, url=url, headers=headers, **kwargs

|

||||

)

|

||||

duration = time.monotonic() - start

|

||||

logger.debug(f"async_request {method} {url} -> {response.status_code} in {duration:.3f}s")

|

||||

logger.debug(

|

||||

f"async_request {method} {url} -> {response.status_code} in {duration:.3f}s"

|

||||

)

|

||||

return response

|

||||

except httpx.RequestError as exc:

|

||||

last_exc = exc

|

||||

if attempt >= retries:

|

||||

logger.warning(f"async_request exhausted retries for {method} {url}: {exc}")

|

||||

logger.warning(

|

||||

f"async_request exhausted retries for {method} {url}: {exc}"

|

||||

)

|

||||

raise

|

||||

delay = _get_delay(backoff_factor, attempt)

|

||||

logger.warning(f"async_request attempt {attempt + 1}/{retries + 1} failed for {method} {url}: {exc}; retrying in {delay:.2f}s")

|

||||

logger.warning(

|

||||

f"async_request attempt {attempt + 1}/{retries + 1} failed for {method} {url}: {exc}; retrying in {delay:.2f}s"

|

||||

)

|

||||

await asyncio.sleep(delay)

|

||||

raise last_exc # pragma: no cover

|

||||

|

||||

|

|

@ -107,39 +123,51 @@ def sync_request(

|

|||

auth_token: Optional[str] = None,

|

||||

retries: Optional[int] = None,

|

||||

backoff_factor: Optional[float] = None,

|

||||

proxies: Any = None,

|

||||

proxy: Any = None,

|

||||

**kwargs: Any,

|

||||

) -> httpx.Response:

|

||||

"""Synchronous counterpart to async_request, for CLI/tests or sync contexts."""

|

||||

timeout = timeout if timeout is not None else DEFAULT_TIMEOUT

|

||||

follow_redirects = DEFAULT_FOLLOW_REDIRECTS if follow_redirects is None else follow_redirects

|

||||

follow_redirects = (

|

||||

DEFAULT_FOLLOW_REDIRECTS if follow_redirects is None else follow_redirects

|

||||

)

|

||||

max_redirects = DEFAULT_MAX_REDIRECTS if max_redirects is None else max_redirects

|

||||

retries = DEFAULT_MAX_RETRIES if retries is None else max(retries, 0)

|

||||

backoff_factor = DEFAULT_BACKOFF_FACTOR if backoff_factor is None else backoff_factor

|

||||

backoff_factor = (

|

||||

DEFAULT_BACKOFF_FACTOR if backoff_factor is None else backoff_factor

|

||||

)

|

||||

headers = _clean_headers(headers, auth_token=auth_token)

|

||||

proxies = DEFAULT_PROXY if proxies is None else proxies

|

||||

proxy = DEFAULT_PROXY if proxy is None else proxy

|

||||

|

||||

with httpx.Client(

|

||||

timeout=timeout,

|

||||

follow_redirects=follow_redirects,

|

||||

max_redirects=max_redirects,

|

||||

proxies=proxies,

|

||||

proxy=proxy,

|

||||

) as client:

|

||||

last_exc: Exception | None = None

|

||||

for attempt in range(retries + 1):

|

||||

try:

|

||||

start = time.monotonic()

|

||||

response = client.request(method=method, url=url, headers=headers, **kwargs)

|

||||

response = client.request(

|

||||

method=method, url=url, headers=headers, **kwargs

|

||||

)

|

||||

duration = time.monotonic() - start

|

||||

logger.debug(f"sync_request {method} {url} -> {response.status_code} in {duration:.3f}s")

|

||||

logger.debug(

|

||||

f"sync_request {method} {url} -> {response.status_code} in {duration:.3f}s"

|

||||

)

|

||||

return response

|

||||

except httpx.RequestError as exc:

|

||||

last_exc = exc

|

||||

if attempt >= retries:

|

||||

logger.warning(f"sync_request exhausted retries for {method} {url}: {exc}")

|

||||

logger.warning(

|

||||

f"sync_request exhausted retries for {method} {url}: {exc}"

|

||||

)

|

||||

raise

|

||||

delay = _get_delay(backoff_factor, attempt)

|

||||

logger.warning(f"sync_request attempt {attempt + 1}/{retries + 1} failed for {method} {url}: {exc}; retrying in {delay:.2f}s")

|

||||

logger.warning(

|

||||

f"sync_request attempt {attempt + 1}/{retries + 1} failed for {method} {url}: {exc}; retrying in {delay:.2f}s"

|

||||

)

|

||||

time.sleep(delay)

|

||||

raise last_exc # pragma: no cover

|

||||

|

||||

|

|

|

|||

|

|

@ -210,7 +210,10 @@ def init_settings():

|

|||

IMAGE2TEXT_CFG = _resolve_per_model_config(image2text_entry, LLM_FACTORY, API_KEY, LLM_BASE_URL)

|

||||

|

||||

CHAT_MDL = CHAT_CFG.get("model", "") or ""

|

||||

EMBEDDING_MDL = os.getenv("TEI_MODEL", "BAAI/bge-small-en-v1.5") if "tei-" in os.getenv("COMPOSE_PROFILES", "") else ""

|

||||

EMBEDDING_MDL = EMBEDDING_CFG.get("model", "") or ""

|

||||

compose_profiles = os.getenv("COMPOSE_PROFILES", "")

|

||||

if "tei-" in compose_profiles:

|

||||

EMBEDDING_MDL = os.getenv("TEI_MODEL", EMBEDDING_MDL or "BAAI/bge-small-en-v1.5")

|

||||

RERANK_MDL = RERANK_CFG.get("model", "") or ""

|

||||

ASR_MDL = ASR_CFG.get("model", "") or ""

|

||||

IMAGE2TEXT_MDL = IMAGE2TEXT_CFG.get("model", "") or ""

|

||||

|

|

|

|||

|

|

@ -61,7 +61,7 @@ def clean_markdown_block(text):

|

|||

str: Cleaned text with Markdown code block syntax removed, and stripped of surrounding whitespace

|

||||

|

||||

"""

|

||||

# Remove opening ```markdown tag with optional whitespace and newlines

|

||||

# Remove opening ```Markdown tag with optional whitespace and newlines

|

||||

# Matches: optional whitespace + ```markdown + optional whitespace + optional newline

|

||||

text = re.sub(r'^\s*```markdown\s*\n?', '', text)

|

||||

|

||||

|

|

|

|||

|

|

@ -2,6 +2,7 @@

|

|||

"id": {"type": "varchar", "default": ""},

|

||||

"doc_id": {"type": "varchar", "default": ""},

|

||||

"kb_id": {"type": "varchar", "default": ""},

|

||||

"mom_id": {"type": "varchar", "default": ""},

|

||||

"create_time": {"type": "varchar", "default": ""},

|

||||

"create_timestamp_flt": {"type": "float", "default": 0.0},

|

||||

"img_id": {"type": "varchar", "default": ""},

|

||||

|

|

|

|||

|

|

@ -51,7 +51,7 @@ We use vision information to resolve problems as human being.

|

|||

```bash

|

||||

python deepdoc/vision/t_ocr.py --inputs=path_to_images_or_pdfs --output_dir=path_to_store_result

|

||||

```

|

||||

The inputs could be directory to images or PDF, or a image or PDF.

|

||||

The inputs could be directory to images or PDF, or an image or PDF.

|

||||

You can look into the folder 'path_to_store_result' where has images which demonstrate the positions of results,

|

||||

txt files which contain the OCR text.

|

||||

<div align="center" style="margin-top:20px;margin-bottom:20px;">

|

||||

|

|

@ -78,7 +78,7 @@ We use vision information to resolve problems as human being.

|

|||

```bash

|

||||

python deepdoc/vision/t_recognizer.py --inputs=path_to_images_or_pdfs --threshold=0.2 --mode=layout --output_dir=path_to_store_result

|

||||

```

|

||||

The inputs could be directory to images or PDF, or a image or PDF.

|

||||

The inputs could be directory to images or PDF, or an image or PDF.

|

||||

You can look into the folder 'path_to_store_result' where has images which demonstrate the detection results as following:

|

||||

<div align="center" style="margin-top:20px;margin-bottom:20px;">

|

||||

<img src="https://github.com/infiniflow/ragflow/assets/12318111/07e0f625-9b28-43d0-9fbb-5bf586cd286f" width="1000"/>

|

||||

|

|

|

|||

|

|

@ -41,7 +41,7 @@ class RAGFlowExcelParser:

|

|||

|

||||

try:

|

||||

file_like_object.seek(0)

|

||||

df = pd.read_csv(file_like_object)

|

||||

df = pd.read_csv(file_like_object, on_bad_lines='skip')

|

||||

return RAGFlowExcelParser._dataframe_to_workbook(df)

|

||||

|

||||

except Exception as e_csv:

|

||||

|

|

@ -164,7 +164,7 @@ class RAGFlowExcelParser:

|

|||

except Exception as e:

|

||||

logging.warning(f"Parse spreadsheet error: {e}, trying to interpret as CSV file")

|

||||

file_like_object.seek(0)

|

||||

df = pd.read_csv(file_like_object)

|

||||

df = pd.read_csv(file_like_object, on_bad_lines='skip')

|

||||

df = df.replace(r"^\s*$", "", regex=True)

|

||||

return df.to_markdown(index=False)

|

||||

|

||||

|

|

|

|||

|

|

@ -155,7 +155,7 @@ class TableStructureRecognizer(Recognizer):

|

|||

while i < len(boxes):

|

||||

if TableStructureRecognizer.is_caption(boxes[i]):

|

||||

if is_english:

|

||||

cap + " "

|

||||

cap += " "

|

||||

cap += boxes[i]["text"]

|

||||

boxes.pop(i)

|

||||

i -= 1

|

||||

|

|

|

|||

|

|

@ -26,8 +26,8 @@ services:

|

|||

# - --no-json-response # Disable JSON response mode in Streamable HTTP transport (instead of SSE over HTTP)

|

||||

|

||||

# Example configuration to start Admin server:

|

||||

# command:

|

||||

# - --enable-adminserver

|

||||

command:

|

||||

- --enable-adminserver

|

||||

ports:

|

||||

- ${SVR_WEB_HTTP_PORT}:80

|

||||

- ${SVR_WEB_HTTPS_PORT}:443

|

||||

|

|

@ -75,8 +75,8 @@ services:

|

|||

# - --no-json-response # Disable JSON response mode in Streamable HTTP transport (instead of SSE over HTTP)

|

||||

|

||||

# Example configuration to start Admin server:

|

||||

# command:

|

||||

# - --enable-adminserver

|

||||

command:

|

||||

- --enable-adminserver

|

||||

ports:

|

||||

- ${SVR_WEB_HTTP_PORT}:80

|

||||

- ${SVR_WEB_HTTPS_PORT}:443

|

||||

|

|

|

|||

|

|

@ -4013,7 +4013,7 @@ Failure:

|

|||

|

||||

**DELETE** `/api/v1/agents/{agent_id}/sessions`

|

||||

|

||||

Deletes sessions of a agent by ID.

|

||||

Deletes sessions of an agent by ID.

|

||||

|

||||

#### Request

|

||||

|

||||

|

|

@ -4072,7 +4072,7 @@ Failure:

|

|||

|

||||

Generates five to ten alternative question strings from the user's original query to retrieve more relevant search results.

|

||||

|

||||

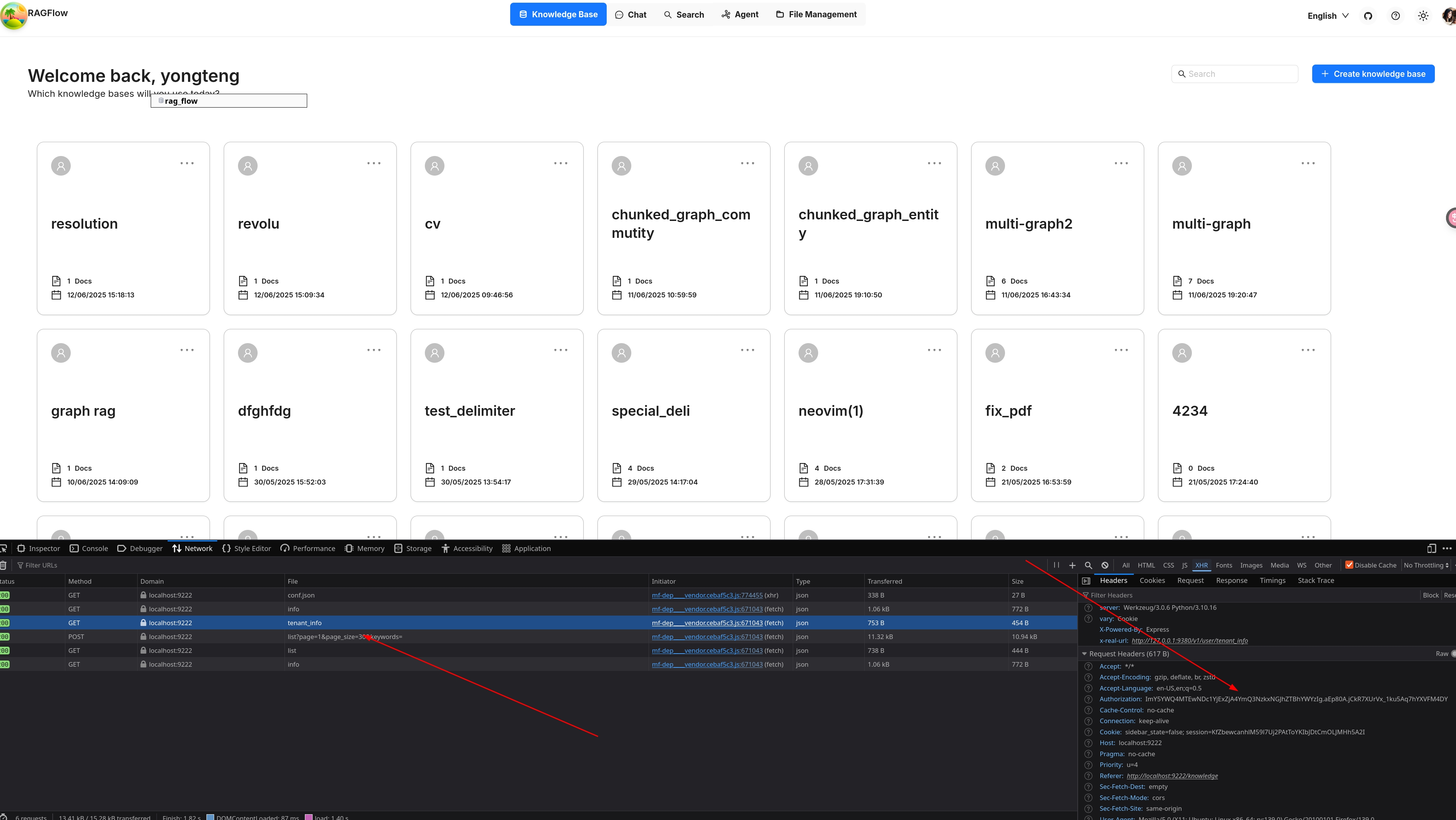

This operation requires a `Bearer Login Token`, which typically expires with in 24 hours. You can find the it in the Request Headers in your browser easily as shown below:

|

||||

This operation requires a `Bearer Login Token`, which typically expires with in 24 hours. You can find it in the Request Headers in your browser easily as shown below:

|

||||

|

||||

|

||||

|

||||

|

|

|

|||

|

|

@ -1740,7 +1740,7 @@ for session in sessions:

|

|||

Agent.delete_sessions(ids: list[str] = None)

|

||||

```

|

||||

|

||||

Deletes sessions of a agent by ID.

|

||||

Deletes sessions of an agent by ID.

|

||||

|

||||

#### Parameters

|

||||

|

||||

|

|

|

|||

|

|

@ -92,6 +92,6 @@ def get_metadata(cls) -> LLMToolMetadata:

|

|||

|

||||

The `get_metadata` method is a `classmethod`. It will provide the description of this tool to LLM.

|

||||

|

||||

The fields starts with `display` can use a special notation: `$t:xxx`, which will use the i18n mechanism in the RAGFlow frontend, getting text from the `llmTools` category. The frontend will display what you put here if you don't use this notation.

|

||||

The fields start with `display` can use a special notation: `$t:xxx`, which will use the i18n mechanism in the RAGFlow frontend, getting text from the `llmTools` category. The frontend will display what you put here if you don't use this notation.

|

||||

|

||||

Now our tool is ready. You can select it in the `Generate` component and try it out.

|

||||

|

|

|

|||

|

|

@ -5,7 +5,7 @@ from plugin.llm_tool_plugin import LLMToolMetadata, LLMToolPlugin

|

|||

class BadCalculatorPlugin(LLMToolPlugin):

|

||||

"""

|

||||

A sample LLM tool plugin, will add two numbers with 100.

|

||||

It only present for demo purpose. Do not use it in production.

|

||||

It only presents for demo purpose. Do not use it in production.

|

||||

"""

|

||||

_version_ = "1.0.0"

|

||||

|

||||

|

|

|

|||

|

|

@ -70,7 +70,7 @@ def chunk(filename, binary=None, from_page=0, to_page=100000,

|

|||

"""

|

||||

Supported file formats are docx, pdf, txt.

|

||||

Since a book is long and not all the parts are useful, if it's a PDF,

|

||||

please setup the page ranges for every book in order eliminate negative effects and save elapsed computing time.

|

||||

please set up the page ranges for every book in order eliminate negative effects and save elapsed computing time.

|

||||

"""

|

||||

parser_config = kwargs.get(

|

||||

"parser_config", {

|

||||

|

|

|

|||

|

|

@ -201,12 +201,23 @@ def chunk(filename, binary=None, from_page=0, to_page=100000,

|

|||

|

||||

elif re.search(r"\.doc$", filename, re.IGNORECASE):

|

||||

callback(0.1, "Start to parse.")

|

||||

binary = BytesIO(binary)

|

||||

doc_parsed = parser.from_buffer(binary)

|

||||

sections = doc_parsed['content'].split('\n')

|

||||

sections = [s for s in sections if s]

|

||||

callback(0.8, "Finish parsing.")

|

||||

try:

|

||||

from tika import parser as tika_parser

|

||||

except Exception as e:

|

||||

callback(0.8, f"tika not available: {e}. Unsupported .doc parsing.")

|

||||

logging.warning(f"tika not available: {e}. Unsupported .doc parsing for {filename}.")

|

||||

return []

|

||||

|

||||

binary = BytesIO(binary)

|

||||

doc_parsed = tika_parser.from_buffer(binary)

|

||||

if doc_parsed.get('content', None) is not None:

|

||||

sections = doc_parsed['content'].split('\n')

|

||||

sections = [s for s in sections if s]

|

||||

callback(0.8, "Finish parsing.")

|

||||

else:

|

||||

callback(0.8, f"tika.parser got empty content from {filename}.")

|

||||

logging.warning(f"tika.parser got empty content from {filename}.")

|

||||

return []

|

||||

else:

|

||||

raise NotImplementedError(

|

||||

"file type not supported yet(doc, docx, pdf, txt supported)")

|

||||

|

|

|

|||

|

|

@ -14,6 +14,7 @@

|

|||

# limitations under the License.

|

||||

#

|

||||

|

||||

import asyncio

|

||||

import io

|

||||

import re

|

||||

|

||||

|

|

@ -50,7 +51,7 @@ def chunk(filename, binary, tenant_id, lang, callback=None, **kwargs):

|

|||

}

|

||||

)

|

||||

cv_mdl = LLMBundle(tenant_id, llm_type=LLMType.IMAGE2TEXT, lang=lang)

|

||||

ans = cv_mdl.chat(system="", history=[], gen_conf={}, video_bytes=binary, filename=filename)

|

||||

ans = asyncio.run(cv_mdl.async_chat(system="", history=[], gen_conf={}, video_bytes=binary, filename=filename))

|

||||

callback(0.8, "CV LLM respond: %s ..." % ans[:32])

|

||||

ans += "\n" + ans

|

||||

tokenize(doc, ans, eng)

|

||||

|

|

|

|||

|

|

@ -313,7 +313,7 @@ def mdQuestionLevel(s):

|

|||

def chunk(filename, binary=None, from_page=0, to_page=100000, lang="Chinese", callback=None, **kwargs):

|

||||

"""

|

||||

Excel and csv(txt) format files are supported.

|

||||

If the file is in excel format, there should be 2 column question and answer without header.

|

||||

If the file is in Excel format, there should be 2 column question and answer without header.

|

||||

And question column is ahead of answer column.

|

||||

And it's O.K if it has multiple sheets as long as the columns are rightly composed.

|

||||

|

||||

|

|

|

|||

|

|

@ -37,7 +37,7 @@ def beAdoc(d, q, a, eng, row_num=-1):

|

|||

def chunk(filename, binary=None, lang="Chinese", callback=None, **kwargs):

|

||||

"""

|

||||

Excel and csv(txt) format files are supported.

|

||||

If the file is in excel format, there should be 2 column content and tags without header.

|

||||

If the file is in Excel format, there should be 2 column content and tags without header.

|

||||

And content column is ahead of tags column.

|

||||

And it's O.K if it has multiple sheets as long as the columns are rightly composed.

|

||||

|

||||

|

|

|

|||

|

|

@ -12,6 +12,7 @@

|

|||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

import asyncio

|

||||

import io

|

||||

import json

|

||||

import os

|

||||

|

|

@ -634,7 +635,7 @@ class Parser(ProcessBase):

|

|||

self.set_output("output_format", conf["output_format"])

|

||||

|

||||

cv_mdl = LLMBundle(self._canvas.get_tenant_id(), LLMType.IMAGE2TEXT, llm_name=conf["llm_id"])

|

||||

txt = cv_mdl.chat(system="", history=[], gen_conf={}, video_bytes=blob, filename=name)

|

||||

txt = asyncio.run(cv_mdl.async_chat(system="", history=[], gen_conf={}, video_bytes=blob, filename=name))

|

||||

|

||||

self.set_output("text", txt)

|

||||

|

||||

|

|

|

|||

|

|

@ -28,7 +28,7 @@ import json_repair

|

|||

import litellm

|

||||

import openai

|

||||

from openai import AsyncOpenAI, OpenAI

|

||||

from openai.lib.azure import AzureOpenAI

|

||||

from openai.lib.azure import AzureOpenAI, AsyncAzureOpenAI

|

||||

from strenum import StrEnum

|

||||

|

||||

from common.token_utils import num_tokens_from_string, total_token_count_from_response

|

||||

|

|

@ -535,6 +535,7 @@ class AzureChat(Base):

|

|||

api_version = json.loads(key).get("api_version", "2024-02-01")

|

||||

super().__init__(key, model_name, base_url, **kwargs)

|

||||

self.client = AzureOpenAI(api_key=api_key, azure_endpoint=base_url, api_version=api_version)

|

||||

self.async_client = AsyncAzureOpenAI(api_key=key, base_url=base_url, api_version=api_version)

|

||||

self.model_name = model_name

|

||||

|

||||

@property

|

||||

|

|

|

|||

|

|

@ -14,6 +14,7 @@

|

|||

# limitations under the License.

|

||||

#

|

||||

|

||||

import asyncio

|

||||

import base64

|

||||

import json

|

||||

import logging

|

||||

|

|

@ -27,9 +28,8 @@ from pathlib import Path

|

|||

from urllib.parse import urljoin

|

||||

|

||||

import requests

|

||||

from openai import OpenAI

|

||||

from openai.lib.azure import AzureOpenAI

|

||||

from zhipuai import ZhipuAI

|

||||

from openai import OpenAI, AsyncOpenAI

|

||||

from openai.lib.azure import AzureOpenAI, AsyncAzureOpenAI

|

||||

|

||||

from common.token_utils import num_tokens_from_string, total_token_count_from_response

|

||||

from rag.nlp import is_english

|

||||

|

|

@ -76,9 +76,9 @@ class Base(ABC):

|

|||

pmpt.append({"type": "image_url", "image_url": {"url": img if isinstance(img, str) and img.startswith("data:") else f"data:image/png;base64,{img}"}})

|

||||

return pmpt

|

||||

|

||||

def chat(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat(self, system, history, gen_conf, images=None, **kwargs):

|

||||

try:

|

||||

response = self.client.chat.completions.create(

|

||||

response = await self.async_client.chat.completions.create(

|

||||

model=self.model_name,

|

||||

messages=self._form_history(system, history, images),

|

||||

extra_body=self.extra_body,

|

||||

|

|

@ -87,17 +87,17 @@ class Base(ABC):

|

|||

except Exception as e:

|

||||

return "**ERROR**: " + str(e), 0

|

||||

|

||||

def chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

ans = ""

|

||||

tk_count = 0

|

||||

try:

|

||||

response = self.client.chat.completions.create(

|

||||

response = await self.async_client.chat.completions.create(

|

||||

model=self.model_name,

|

||||

messages=self._form_history(system, history, images),

|

||||

stream=True,

|

||||

extra_body=self.extra_body,

|

||||

)

|

||||

for resp in response:

|

||||

async for resp in response:

|

||||

if not resp.choices[0].delta.content:

|

||||

continue

|

||||

delta = resp.choices[0].delta.content

|

||||

|

|

@ -191,6 +191,7 @@ class GptV4(Base):

|

|||

base_url = "https://api.openai.com/v1"

|

||||

self.api_key = key

|

||||

self.client = OpenAI(api_key=key, base_url=base_url)

|

||||

self.async_client = AsyncOpenAI(api_key=key, base_url=base_url)

|

||||

self.model_name = model_name

|

||||

self.lang = lang

|

||||

super().__init__(**kwargs)

|

||||

|

|

@ -221,6 +222,7 @@ class AzureGptV4(GptV4):

|

|||

api_key = json.loads(key).get("api_key", "")

|

||||

api_version = json.loads(key).get("api_version", "2024-02-01")

|

||||

self.client = AzureOpenAI(api_key=api_key, azure_endpoint=kwargs["base_url"], api_version=api_version)

|

||||

self.async_client = AsyncAzureOpenAI(api_key=api_key, azure_endpoint=kwargs["base_url"], api_version=api_version)

|

||||

self.model_name = model_name

|

||||

self.lang = lang

|

||||

Base.__init__(self, **kwargs)

|

||||

|

|

@ -243,7 +245,7 @@ class QWenCV(GptV4):

|

|||

base_url = "https://dashscope.aliyuncs.com/compatible-mode/v1"

|

||||

super().__init__(key, model_name, lang=lang, base_url=base_url, **kwargs)

|

||||

|

||||

def chat(self, system, history, gen_conf, images=None, video_bytes=None, filename="", **kwargs):

|

||||

async def async_chat(self, system, history, gen_conf, images=None, video_bytes=None, filename="", **kwargs):

|

||||

if video_bytes:

|

||||

try:

|

||||

summary, summary_num_tokens = self._process_video(video_bytes, filename)

|

||||

|

|

@ -313,7 +315,8 @@ class Zhipu4V(GptV4):

|

|||

_FACTORY_NAME = "ZHIPU-AI"

|

||||

|

||||

def __init__(self, key, model_name="glm-4v", lang="Chinese", **kwargs):

|

||||

self.client = ZhipuAI(api_key=key)

|

||||

self.client = OpenAI(api_key=key, base_url="https://open.bigmodel.cn/api/paas/v4/")

|

||||

self.async_client = AsyncOpenAI(api_key=key, base_url="https://open.bigmodel.cn/api/paas/v4/")

|

||||

self.model_name = model_name

|

||||

self.lang = lang

|

||||

Base.__init__(self, **kwargs)

|

||||

|

|

@ -342,20 +345,20 @@ class Zhipu4V(GptV4):

|

|||

)

|

||||

return response.json()

|

||||

|

||||

def chat(self, system, history, gen_conf, images=None, stream=False, **kwargs):

|

||||

async def async_chat(self, system, history, gen_conf, images=None, **kwargs):

|

||||

if system and history and history[0].get("role") != "system":

|

||||

history.insert(0, {"role": "system", "content": system})

|

||||

|

||||

gen_conf = self._clean_conf(gen_conf)

|

||||

|

||||

logging.info(json.dumps(history, ensure_ascii=False, indent=2))

|

||||

response = self.client.chat.completions.create(model=self.model_name, messages=self._form_history(system, history, images), stream=False, **gen_conf)

|

||||

response = await self.async_client.chat.completions.create(model=self.model_name, messages=self._form_history(system, history, images), stream=False, **gen_conf)

|

||||

content = response.choices[0].message.content.strip()

|

||||

|

||||

cleaned = re.sub(r"<\|(begin_of_box|end_of_box)\|>", "", content).strip()

|

||||

return cleaned, total_token_count_from_response(response)

|

||||

|

||||

def chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

from rag.llm.chat_model import LENGTH_NOTIFICATION_CN, LENGTH_NOTIFICATION_EN

|

||||

from rag.nlp import is_chinese

|

||||

|

||||

|

|

@ -366,8 +369,8 @@ class Zhipu4V(GptV4):

|

|||

tk_count = 0

|

||||

try:

|

||||

logging.info(json.dumps(history, ensure_ascii=False, indent=2))

|

||||

response = self.client.chat.completions.create(model=self.model_name, messages=self._form_history(system, history, images), stream=True, **gen_conf)

|

||||

for resp in response:

|

||||

response = await self.async_client.chat.completions.create(model=self.model_name, messages=self._form_history(system, history, images), stream=True, **gen_conf)

|

||||

async for resp in response:

|

||||

if not resp.choices[0].delta.content:

|

||||

continue

|

||||

delta = resp.choices[0].delta.content

|

||||

|

|

@ -412,6 +415,7 @@ class StepFunCV(GptV4):

|

|||

if not base_url:

|

||||

base_url = "https://api.stepfun.com/v1"

|

||||

self.client = OpenAI(api_key=key, base_url=base_url)

|

||||

self.async_client = AsyncOpenAI(api_key=key, base_url=base_url)

|

||||

self.model_name = model_name

|

||||

self.lang = lang

|

||||

Base.__init__(self, **kwargs)

|

||||

|

|

@ -425,6 +429,7 @@ class VolcEngineCV(GptV4):

|

|||

base_url = "https://ark.cn-beijing.volces.com/api/v3"

|

||||

ark_api_key = json.loads(key).get("ark_api_key", "")

|

||||

self.client = OpenAI(api_key=ark_api_key, base_url=base_url)

|

||||

self.async_client = AsyncOpenAI(api_key=ark_api_key, base_url=base_url)

|

||||

self.model_name = json.loads(key).get("ep_id", "") + json.loads(key).get("endpoint_id", "")

|

||||

self.lang = lang

|

||||

Base.__init__(self, **kwargs)

|

||||

|

|

@ -438,6 +443,7 @@ class LmStudioCV(GptV4):

|

|||

raise ValueError("Local llm url cannot be None")

|

||||

base_url = urljoin(base_url, "v1")

|

||||

self.client = OpenAI(api_key="lm-studio", base_url=base_url)

|

||||

self.async_client = AsyncOpenAI(api_key="lm-studio", base_url=base_url)

|

||||

self.model_name = model_name

|

||||

self.lang = lang

|

||||

Base.__init__(self, **kwargs)

|

||||

|

|

@ -451,6 +457,7 @@ class OpenAI_APICV(GptV4):

|

|||

raise ValueError("url cannot be None")

|

||||

base_url = urljoin(base_url, "v1")

|

||||

self.client = OpenAI(api_key=key, base_url=base_url)

|

||||

self.async_client = AsyncOpenAI(api_key=key, base_url=base_url)

|

||||

self.model_name = model_name.split("___")[0]

|

||||

self.lang = lang

|

||||

Base.__init__(self, **kwargs)

|

||||

|

|

@ -491,6 +498,7 @@ class OpenRouterCV(GptV4):

|

|||

base_url = "https://openrouter.ai/api/v1"

|

||||

api_key = json.loads(key).get("api_key", "")

|

||||

self.client = OpenAI(api_key=api_key, base_url=base_url)

|

||||

self.async_client = AsyncOpenAI(api_key=api_key, base_url=base_url)

|

||||

self.model_name = model_name

|

||||

self.lang = lang

|

||||

Base.__init__(self, **kwargs)

|

||||

|

|

@ -522,6 +530,7 @@ class LocalAICV(GptV4):

|

|||

raise ValueError("Local cv model url cannot be None")

|

||||

base_url = urljoin(base_url, "v1")

|

||||

self.client = OpenAI(api_key="empty", base_url=base_url)

|

||||

self.async_client = AsyncOpenAI(api_key="empty", base_url=base_url)

|

||||

self.model_name = model_name.split("___")[0]

|

||||

self.lang = lang

|

||||

Base.__init__(self, **kwargs)

|

||||

|

|

@ -533,6 +542,7 @@ class XinferenceCV(GptV4):

|

|||

def __init__(self, key, model_name="", lang="Chinese", base_url="", **kwargs):

|

||||

base_url = urljoin(base_url, "v1")

|

||||

self.client = OpenAI(api_key=key, base_url=base_url)

|

||||

self.async_client = AsyncOpenAI(api_key=key, base_url=base_url)

|

||||

self.model_name = model_name

|

||||

self.lang = lang

|

||||

Base.__init__(self, **kwargs)

|

||||

|

|

@ -546,6 +556,7 @@ class GPUStackCV(GptV4):

|

|||

raise ValueError("Local llm url cannot be None")

|

||||

base_url = urljoin(base_url, "v1")

|

||||

self.client = OpenAI(api_key=key, base_url=base_url)

|

||||

self.async_client = AsyncOpenAI(api_key=key, base_url=base_url)

|

||||

self.model_name = model_name

|

||||

self.lang = lang

|

||||

Base.__init__(self, **kwargs)

|

||||

|

|

@ -635,19 +646,19 @@ class OllamaCV(Base):

|

|||

except Exception as e:

|

||||

return "**ERROR**: " + str(e), 0

|

||||

|

||||

def chat(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat(self, system, history, gen_conf, images=None, **kwargs):

|

||||

try:

|

||||

response = self.client.chat(model=self.model_name, messages=self._form_history(system, history, images), options=self._clean_conf(gen_conf), keep_alive=self.keep_alive)

|

||||

response = await asyncio.to_thread(self.client.chat, model=self.model_name, messages=self._form_history(system, history, images), options=self._clean_conf(gen_conf), keep_alive=self.keep_alive)

|

||||

|

||||

ans = response["message"]["content"].strip()

|

||||

return ans, response["eval_count"] + response.get("prompt_eval_count", 0)

|

||||

except Exception as e:

|

||||

return "**ERROR**: " + str(e), 0

|

||||

|

||||

def chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

ans = ""

|

||||

try:

|

||||

response = self.client.chat(model=self.model_name, messages=self._form_history(system, history, images), stream=True, options=self._clean_conf(gen_conf), keep_alive=self.keep_alive)

|

||||

response = await asyncio.to_thread(self.client.chat, model=self.model_name, messages=self._form_history(system, history, images), stream=True, options=self._clean_conf(gen_conf), keep_alive=self.keep_alive)

|

||||

for resp in response:

|

||||

if resp["done"]:

|

||||

yield resp.get("prompt_eval_count", 0) + resp.get("eval_count", 0)

|

||||

|

|

@ -780,41 +791,41 @@ class GeminiCV(Base):

|

|||

)

|

||||

return res.text, total_token_count_from_response(res)

|

||||

|

||||

def chat(self, system, history, gen_conf, images=None, video_bytes=None, filename="", **kwargs):

|

||||

async def async_chat(self, system, history, gen_conf, images=None, video_bytes=None, filename="", **kwargs):

|

||||

if video_bytes:

|

||||

try:

|

||||

size = len(video_bytes) if video_bytes else 0

|

||||

logging.info(f"[GeminiCV] chat called with video: filename={filename} size={size}")

|

||||

summary, summary_num_tokens = self._process_video(video_bytes, filename)

|

||||

logging.info(f"[GeminiCV] async_chat called with video: filename={filename} size={size}")

|

||||

summary, summary_num_tokens = await asyncio.to_thread(self._process_video, video_bytes, filename)

|

||||

return summary, summary_num_tokens

|

||||

except Exception as e:

|

||||

logging.info(f"[GeminiCV] chat video error: {e}")

|

||||

logging.info(f"[GeminiCV] async_chat video error: {e}")

|

||||

return "**ERROR**: " + str(e), 0

|

||||

|

||||

from google.genai import types

|

||||

|

||||

history_len = len(history) if history else 0

|

||||

images_len = len(images) if images else 0

|

||||

logging.info(f"[GeminiCV] chat called: history_len={history_len} images_len={images_len} gen_conf={gen_conf}")

|

||||

logging.info(f"[GeminiCV] async_chat called: history_len={history_len} images_len={images_len} gen_conf={gen_conf}")

|

||||

|

||||

generation_config = types.GenerateContentConfig(

|

||||

temperature=gen_conf.get("temperature", 0.3),

|

||||

top_p=gen_conf.get("top_p", 0.7),

|

||||

)

|

||||

try:

|

||||

response = self.client.models.generate_content(

|

||||

response = await self.client.aio.models.generate_content(

|

||||

model=self.model_name,

|

||||

contents=self._form_history(system, history, images),

|

||||

config=generation_config,

|

||||

)

|

||||

ans = response.text

|

||||

logging.info("[GeminiCV] chat completed")

|

||||

logging.info("[GeminiCV] async_chat completed")

|

||||

return ans, total_token_count_from_response(response)

|

||||

except Exception as e:

|

||||

logging.warning(f"[GeminiCV] chat error: {e}")

|

||||

logging.warning(f"[GeminiCV] async_chat error: {e}")

|

||||

return "**ERROR**: " + str(e), 0

|

||||

|

||||

def chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

ans = ""

|

||||

response = None

|

||||

try:

|

||||

|

|

@ -826,15 +837,15 @@ class GeminiCV(Base):

|

|||

)

|

||||

history_len = len(history) if history else 0

|

||||

images_len = len(images) if images else 0

|

||||

logging.info(f"[GeminiCV] chat_streamly called: history_len={history_len} images_len={images_len} gen_conf={gen_conf}")

|

||||

logging.info(f"[GeminiCV] async_chat_streamly called: history_len={history_len} images_len={images_len} gen_conf={gen_conf}")

|

||||

|

||||

response_stream = self.client.models.generate_content_stream(

|

||||

response_stream = await self.client.aio.models.generate_content_stream(

|

||||

model=self.model_name,

|

||||

contents=self._form_history(system, history, images),

|

||||

config=generation_config,

|

||||

)

|

||||

|

||||

for chunk in response_stream:

|

||||

async for chunk in response_stream:

|

||||

if chunk.text:

|

||||

ans += chunk.text

|

||||

yield chunk.text

|

||||

|

|

@ -939,17 +950,17 @@ class NvidiaCV(Base):

|

|||

response = self._request(vision_prompt)

|

||||

return (response["choices"][0]["message"]["content"].strip(), total_token_count_from_response(response))

|

||||

|

||||

def chat(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat(self, system, history, gen_conf, images=None, **kwargs):

|

||||

try:

|

||||

response = self._request(self._form_history(system, history, images), gen_conf)

|

||||

response = await asyncio.to_thread(self._request, self._form_history(system, history, images), gen_conf)

|

||||

return (response["choices"][0]["message"]["content"].strip(), total_token_count_from_response(response))

|

||||

except Exception as e:

|

||||

return "**ERROR**: " + str(e), 0

|

||||

|

||||

def chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

total_tokens = 0

|

||||

try:

|

||||

response = self._request(self._form_history(system, history, images), gen_conf)

|

||||

response = await asyncio.to_thread(self._request, self._form_history(system, history, images), gen_conf)

|

||||

cnt = response["choices"][0]["message"]["content"]

|

||||

total_tokens += total_token_count_from_response(response)

|

||||

for resp in cnt:

|

||||

|

|

@ -967,6 +978,7 @@ class AnthropicCV(Base):

|

|||

import anthropic

|

||||

|

||||

self.client = anthropic.Anthropic(api_key=key)

|

||||

self.async_client = anthropic.AsyncAnthropic(api_key=key)

|

||||

self.model_name = model_name

|

||||

self.system = ""

|

||||

self.max_tokens = 8192

|

||||

|

|

@ -1012,17 +1024,18 @@ class AnthropicCV(Base):

|

|||

gen_conf["max_tokens"] = self.max_tokens

|

||||

return gen_conf

|

||||

|

||||

def chat(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat(self, system, history, gen_conf, images=None, **kwargs):

|

||||

gen_conf = self._clean_conf(gen_conf)

|

||||

ans = ""

|

||||

try:

|

||||

response = self.client.messages.create(

|

||||

response = await self.async_client.messages.create(

|

||||

model=self.model_name,

|

||||

messages=self._form_history(system, history, images),

|

||||

system=system,

|

||||

stream=False,

|

||||

**gen_conf,

|

||||

).to_dict()

|

||||

)

|

||||

response = response.to_dict()

|

||||

ans = response["content"][0]["text"]

|

||||

if response["stop_reason"] == "max_tokens":

|

||||

ans += "...\nFor the content length reason, it stopped, continue?" if is_english([ans]) else "······\n由于长度的原因,回答被截断了,要继续吗?"

|

||||

|

|

@ -1033,11 +1046,11 @@ class AnthropicCV(Base):

|

|||

except Exception as e:

|

||||

return ans + "\n**ERROR**: " + str(e), 0

|

||||

|

||||

def chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

gen_conf = self._clean_conf(gen_conf)

|

||||

total_tokens = 0

|

||||

try:

|

||||

response = self.client.messages.create(

|

||||

response = self.async_client.messages.create(

|

||||

model=self.model_name,

|

||||

messages=self._form_history(system, history, images),

|

||||

system=system,

|

||||

|

|

@ -1045,7 +1058,7 @@ class AnthropicCV(Base):

|

|||

**gen_conf,

|

||||

)

|

||||

think = False

|

||||

for res in response:

|

||||

async for res in response:

|

||||

if res.type == "content_block_delta":

|

||||

if res.delta.type == "thinking_delta" and res.delta.thinking:

|

||||

if not think:

|

||||

|

|

@ -1117,18 +1130,18 @@ class GoogleCV(AnthropicCV, GeminiCV):

|

|||

else:

|

||||

return GeminiCV.describe_with_prompt(self, image, prompt)

|

||||

|

||||

def chat(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat(self, system, history, gen_conf, images=None, **kwargs):

|

||||

if "claude" in self.model_name:

|

||||

return AnthropicCV.chat(self, system, history, gen_conf, images)

|

||||

return await AnthropicCV.async_chat(self, system, history, gen_conf, images)

|

||||

else:

|

||||

return GeminiCV.chat(self, system, history, gen_conf, images)

|

||||

return await GeminiCV.async_chat(self, system, history, gen_conf, images)

|

||||

|

||||

def chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

async def async_chat_streamly(self, system, history, gen_conf, images=None, **kwargs):

|

||||

if "claude" in self.model_name:

|

||||

for ans in AnthropicCV.chat_streamly(self, system, history, gen_conf, images):

|

||||

async for ans in AnthropicCV.async_chat_streamly(self, system, history, gen_conf, images):

|

||||

yield ans

|

||||

else:

|

||||

for ans in GeminiCV.chat_streamly(self, system, history, gen_conf, images):

|

||||

async for ans in GeminiCV.async_chat_streamly(self, system, history, gen_conf, images):

|

||||

yield ans

|

||||

|

||||

|

||||

|

|

|

|||

|

|

@ -91,7 +91,7 @@ class Dealer:

|

|||

["docnm_kwd", "content_ltks", "kb_id", "img_id", "title_tks", "important_kwd", "position_int",

|

||||

"doc_id", "page_num_int", "top_int", "create_timestamp_flt", "knowledge_graph_kwd",

|

||||

"question_kwd", "question_tks", "doc_type_kwd",

|

||||

"available_int", "content_with_weight", PAGERANK_FLD, TAG_FLD])

|

||||

"available_int", "content_with_weight", "mom_id", PAGERANK_FLD, TAG_FLD])

|

||||

kwds = set([])

|

||||

|

||||

qst = req.get("question", "")

|

||||

|

|

@ -469,6 +469,7 @@ class Dealer:

|

|||

"vector": chunk.get(vector_column, zero_vector),

|

||||

"positions": position_int,

|

||||

"doc_type_kwd": chunk.get("doc_type_kwd", ""),

|

||||

"mom_id": chunk.get("mom_id", ""),

|

||||

}

|

||||

if highlight and sres.highlight:

|

||||

if id in sres.highlight:

|

||||

|

|

@ -650,7 +651,8 @@ class Dealer:

|

|||

i = 0

|

||||

while i < len(chunks):

|

||||

ck = chunks[i]

|

||||

if not ck.get("mom_id"):

|

||||

mom_id = ck.get("mom_id")

|

||||

if not isinstance(mom_id, str) or not mom_id.strip():

|

||||

i += 1

|

||||

continue

|

||||

mom_chunks[ck["mom_id"]].append(chunks.pop(i))

|

||||

|

|

|

|||

|

|

@ -781,7 +781,10 @@ async def run_toc_from_text(chunks, chat_mdl, callback=None):

|

|||

|

||||

# Merge structure and content (by index)

|

||||

prune = len(toc_with_levels) > 512

|

||||

max_lvl = sorted([t.get("level", "0") for t in toc_with_levels if isinstance(t, dict)])[-1]

|

||||

max_lvl = "0"

|

||||

sorted_list = sorted([t.get("level", "0") for t in toc_with_levels if isinstance(t, dict)])

|

||||

if sorted_list:

|

||||

max_lvl = sorted_list[-1]

|

||||

merged = []

|

||||

for _ , (toc_item, src_item) in enumerate(zip(toc_with_levels, filtered)):

|

||||

if prune and toc_item.get("level", "0") >= max_lvl:

|

||||

|

|

|

|||

|

|

@ -727,17 +727,17 @@ async def insert_es(task_id, task_tenant_id, task_dataset_id, chunks, progress_c

|

|||

if not mom:

|

||||

continue

|

||||

id = xxhash.xxh64(mom.encode("utf-8")).hexdigest()

|

||||

ck["mom_id"] = id

|

||||

if id in mother_ids:

|

||||

continue

|

||||

mother_ids.add(id)

|

||||

ck["mom_id"] = id

|

||||

mom_ck = copy.deepcopy(ck)

|

||||

mom_ck["id"] = id

|

||||

mom_ck["content_with_weight"] = mom

|

||||

mom_ck["available_int"] = 0

|

||||

flds = list(mom_ck.keys())

|

||||

for fld in flds:

|

||||

if fld not in ["id", "content_with_weight", "doc_id", "kb_id", "available_int", "position_int"]:

|

||||

if fld not in ["id", "content_with_weight", "doc_id", "docnm_kwd", "kb_id", "available_int", "position_int"]:

|

||||

del mom_ck[fld]

|

||||

mothers.append(mom_ck)

|

||||

|

||||

|

|

|

|||

|

|

@ -244,7 +244,7 @@ export interface JsonEditorOptions {

|

|||

timestampFormat?: string;