- Made is_available() abstract in base.py with proper implementation in providers

- Added original_error parameter to UnsupportedLanguageError and TranslationConfigError

- Added Field validation for confidence_threshold bounds (0.0-1.0)

- Changed @lru_cache to @lru_cache() for explicit style

- Added get_translation_provider to __all__ in providers/__init__.py

- Replaced deprecated asyncio.get_event_loop() with get_running_loop()

- Added debug logging to is_available() in GoogleTranslationProvider

- Added TODO comment for confidence score improvement in OpenAIProvider

- Added None check for read_query_prompt() with fallback default prompt

- Moved ClientSession outside batch loop in AzureTranslationProvider

- Fixed Optional[float] type annotation in detect_language()

- Added Note section documenting in-place mutation in translate_content()

- Added test_confidence_threshold_validation() for bounds testing

- Added descriptive assertion messages to config tests

- Converted all async tests to use @pytest.mark.asyncio decorators

- Replaced manual skip checks with @pytest.mark.skipif

- Removed manual main() blocks, tests now pytest-only

- Changed Chinese language assertion to use startswith('zh') for flexibility
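Three of the changes above (the bounds check on confidence_threshold, the explicit `@lru_cache()` form, and the move to `asyncio.get_running_loop()`) can be sketched with the standard library alone. This is an illustration, not the project's code: the changelog mentions Field validation, which is replaced here by a plain function, and the names `validate_confidence_threshold`, `get_default_threshold`, and `run_blocking` are hypothetical.

```python
import asyncio
from functools import lru_cache


def validate_confidence_threshold(value: float) -> float:
    """Reject thresholds outside the inclusive range 0.0-1.0."""
    if not 0.0 <= value <= 1.0:
        raise ValueError(
            f"confidence_threshold must be within [0.0, 1.0], got {value}"
        )
    return value


@lru_cache()  # explicit call form; behaves like bare @lru_cache on Python 3.8+
def get_default_threshold() -> float:
    # Hypothetical cached accessor; the value 0.8 is an assumed default.
    return validate_confidence_threshold(0.8)


async def run_blocking(func, *args):
    # get_running_loop() only works while an event loop is running,
    # so it is called from inside a coroutine.
    loop = asyncio.get_running_loop()
    return await loop.run_in_executor(None, func, *args)


async def demo() -> float:
    return await run_blocking(get_default_threshold)


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

Unlike `get_event_loop()`, `get_running_loop()` raises `RuntimeError` when no loop is running, which surfaces misuse early instead of silently creating a new loop.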


Cognee - Accurate and Persistent AI Memory

Demo · Docs · Learn More · Join Discord · Join r/AIMemory · Community Plugins & Add-ons

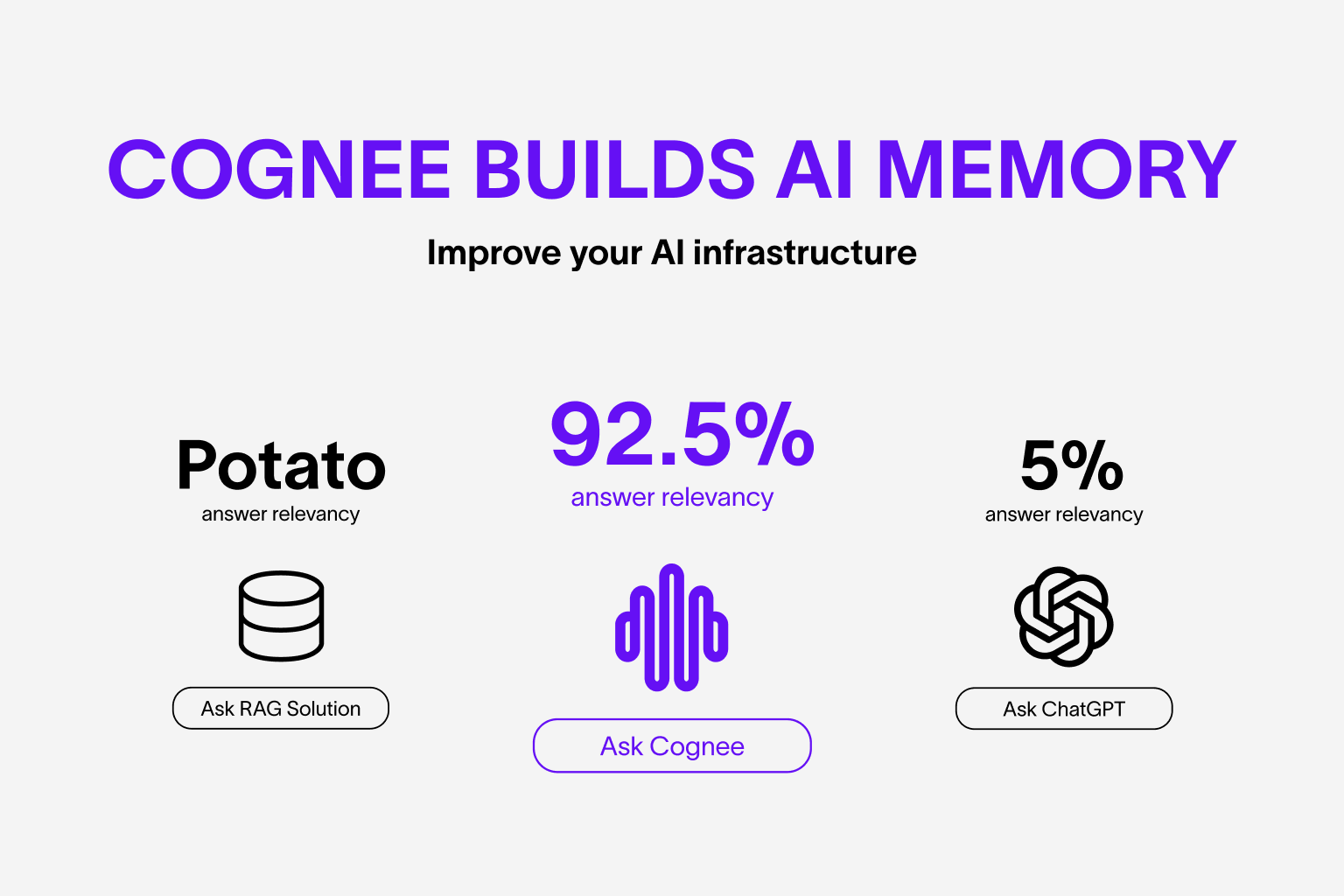

Use your data to build personalized and dynamic memory for AI Agents. Cognee lets you replace RAG with scalable and modular ECL (Extract, Cognify, Load) pipelines.

🌐 Available Languages: Deutsch | Español | Français | 日本語 | 한국어 | Português | Русский | 中文

About Cognee

Cognee is an open-source tool and platform that transforms your raw data into persistent and dynamic AI memory for Agents. It combines vector search with graph databases to make your documents both searchable by meaning and connected by relationships.
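The idea of pairing similarity search with relationship lookups can be shown with a toy, in-memory sketch. It is not how Cognee is implemented: the embeddings are hand-written three-dimensional vectors, the graph is a plain dictionary, and `cosine` and `search` are hypothetical helpers introduced only for illustration.

```python
import math

# Toy "embeddings": hand-written vectors, not produced by any model.
embeddings = {
    "doc_a": [1.0, 0.0, 0.0],
    "doc_b": [0.9, 0.1, 0.0],
    "doc_c": [0.0, 1.0, 0.0],
}

# Toy relationship graph: an edge means "these documents are related".
graph = {
    "doc_a": ["doc_c"],
    "doc_b": [],
    "doc_c": ["doc_a"],
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def search(query_vec, top_k=1):
    """Return the top_k most similar documents plus their graph neighbours."""
    ranked = sorted(
        embeddings,
        key=lambda name: cosine(query_vec, embeddings[name]),
        reverse=True,
    )
    hits = ranked[:top_k]
    # Follow relationships to surface documents that are connected to a hit
    # even though they are not similar to the query themselves.
    related = [nb for hit in hits for nb in graph[hit] if nb not in hits]
    return hits, related


hits, related = search([1.0, 0.0, 0.0])
print(hits, related)  # prints: ['doc_a'] ['doc_c']
```

Here `doc_c` has zero similarity to the query and would be missed by vector search alone; it is returned only because the graph links it to `doc_a`.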

You can use Cognee in two ways:

- Self-host Cognee Open Source, which stores all data locally by default.

- Connect to Cognee Cloud, and get the same OSS stack on managed infrastructure for easier development and productionization.

Cognee Open Source (self-hosted):

- Interconnects any type of data, including past conversations, files, images, and audio transcriptions

- Replaces traditional RAG systems with a unified memory layer built on graphs and vectors

- Reduces developer effort and infrastructure cost while improving quality and precision

- Provides Pythonic data pipelines for ingestion from 30+ data sources

- Offers high customizability through user-defined tasks, modular pipelines, and built-in search endpoints

Cognee Cloud (managed):

- Hosted web UI dashboard

- Automatic version updates

- Resource usage analytics

- GDPR compliant, enterprise-grade security

Basic Usage & Feature Guide

To learn more, check out this short, end-to-end Colab walkthrough of Cognee's core features.

Quickstart

Let’s try Cognee in just a few lines of code. For detailed setup and configuration, see the Cognee Docs.

Prerequisites

- Python 3.10 to 3.13

Step 1: Install Cognee

You can install Cognee with pip, poetry, uv, or your preferred Python package manager.

```bash
uv pip install cognee
```

Step 2: Configure the LLM

```python
import os

os.environ["LLM_API_KEY"] = "YOUR OPENAI_API_KEY"
```

Alternatively, create a .env file using our template.

To integrate other LLM providers, see our LLM Provider Documentation.
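Before running the pipeline, it can help to confirm that the key is actually configured. The sketch below parses simple `KEY=VALUE` lines in the style of a .env file and checks for `LLM_API_KEY`. Both `parse_env_text` and `require_llm_key` are hypothetical helpers, not part of Cognee, and the parser covers only the simplest .env syntax (no multi-line values, escapes, or variable expansion).

```python
import os


def parse_env_text(text):
    """Parse simple KEY=VALUE lines, skipping blanks and # comments."""
    values = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop surrounding whitespace and one layer of optional quotes.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def require_llm_key(env=os.environ):
    """Return LLM_API_KEY from the given mapping or fail with a clear error."""
    key = env.get("LLM_API_KEY", "")
    if not key:
        raise RuntimeError("LLM_API_KEY is not set")
    return key


# Example with an in-memory string; "sk-example" is a placeholder value.
sample = 'LLM_API_KEY="sk-example"\n# a comment line\n'
settings = parse_env_text(sample)
print(require_llm_key(settings))  # prints: sk-example
```

Calling `require_llm_key()` with no argument checks the current process environment, which matches the `os.environ` assignment shown in this step.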

Step 3: Run the Pipeline

Cognee takes your documents, generates a knowledge graph from them, and then queries the graph based on the combined relationships.

Now, run a minimal pipeline:

```python
import cognee
import asyncio


async def main():
    # Add text to cognee
    await cognee.add("Cognee turns documents into AI memory.")

    # Generate the knowledge graph
    await cognee.cognify()

    # Add memory algorithms to the graph
    await cognee.memify()

    # Query the knowledge graph
    results = await cognee.search("What does Cognee do?")

    # Display the results
    for result in results:
        print(result)


if __name__ == '__main__':
    asyncio.run(main())
```

As you can see, the output is generated from the document we previously stored in Cognee:

Cognee turns documents into AI memory.

Use the Cognee CLI

As an alternative, you can get started with these essential commands:

```bash
cognee-cli add "Cognee turns documents into AI memory."
cognee-cli cognify
cognee-cli search "What does Cognee do?"
cognee-cli delete --all
```

To open the local UI, run:

```bash
cognee-cli -ui
```

Demos & Examples

See Cognee in action:

Persistent Agent Memory

Cognee Memory for LangGraph Agents

Simple GraphRAG

Cognee with Ollama

Community & Support

Contributing

We welcome contributions from the community! Your input helps make Cognee better for everyone. See CONTRIBUTING.md to get started.

Code of Conduct

We're committed to fostering an inclusive and respectful community. Read our Code of Conduct for guidelines.

Research & Citation

We recently published a research paper on optimizing knowledge graphs for LLM reasoning:

```bibtex
@misc{markovic2025optimizinginterfaceknowledgegraphs,
      title={Optimizing the Interface Between Knowledge Graphs and LLMs for Complex Reasoning},
      author={Vasilije Markovic and Lazar Obradovic and Laszlo Hajdu and Jovan Pavlovic},
      year={2025},
      eprint={2505.24478},
      archivePrefix={arXiv},
      primaryClass={cs.AI},
      url={https://arxiv.org/abs/2505.24478},
}
```