
cognee - Memory for AI Agents in 6 lines of code

Demo · Learn more · Join Discord · Join r/AIMemory · Docs · cognee community repo

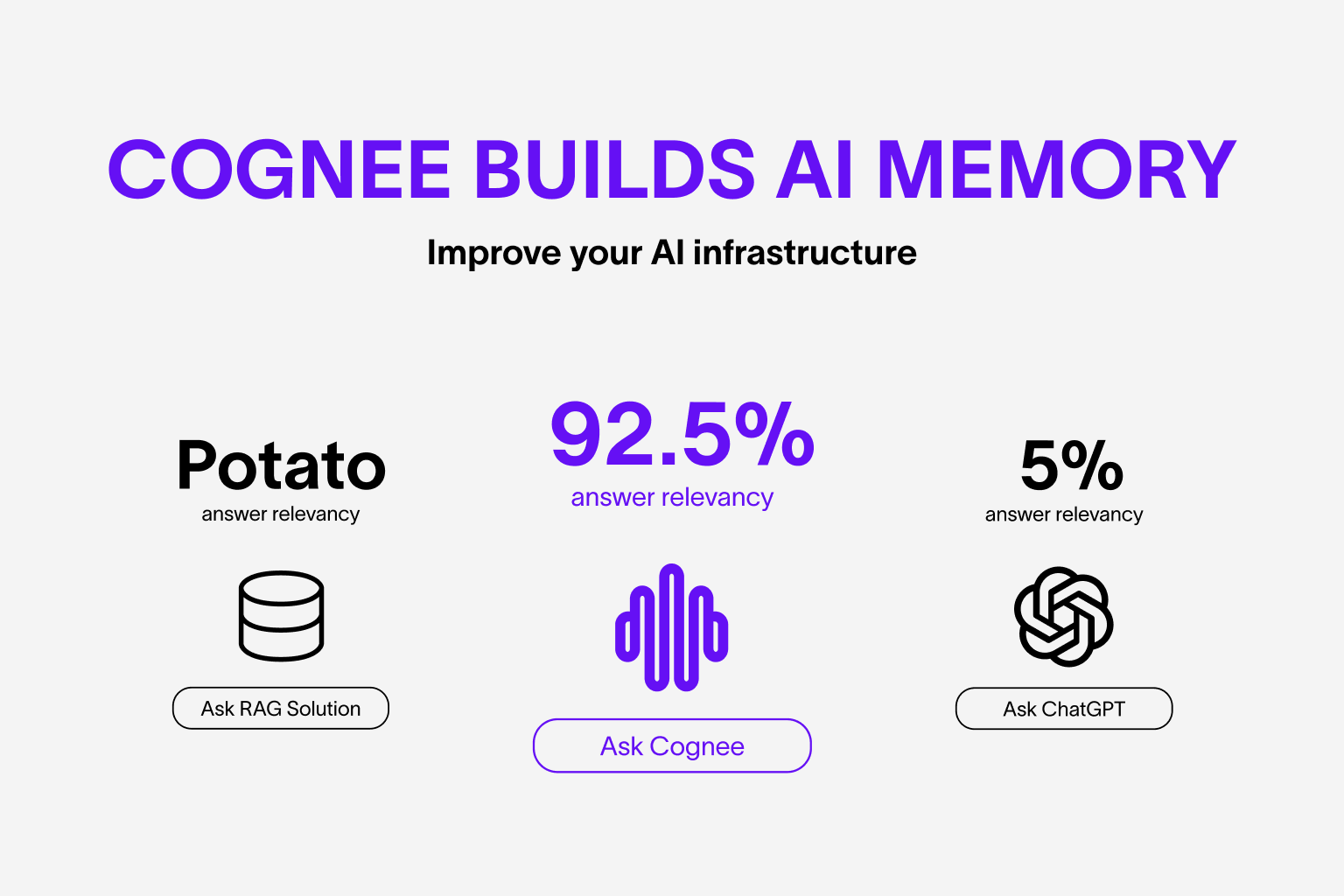

Build dynamic memory for Agents and replace RAG using scalable, modular ECL (Extract, Cognify, Load) pipelines.

🌐 Available Languages: Deutsch | Español | français | 日本語 | 한국어 | Português | Русский | 中文

Features

- Interconnect and retrieve your past conversations, documents, images and audio transcriptions

- Replace RAG systems and reduce developer effort and cost

- Load data to graph and vector databases using only Pydantic

- Manipulate your data while ingesting from 30+ data sources

Get Started

Get started quickly with a Google Colab notebook, a Deepnote notebook, or the starter repo.

Using cognee

Self-hosted package:

- Get self-serve UI with embedded Python notebooks

- Add custom tasks and pipelines via Python SDK

- Get Docker images and MCP servers you can deploy

- Use the distributed cognee SDK to process terabytes of your data

- Use community adapters to connect to Redis, Azure, Falkor and others

Hosted platform:

- Sync your local data to our hosted solution

- Get a secure API endpoint

- We manage the UI for you

Self-Hosted (Open Source)

📦 Installation

You can install Cognee using pip, poetry, uv, or any other Python package manager.

Cognee supports Python 3.10 to 3.12.
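If you want to confirm that your interpreter falls in that range before installing, a small check like the following may help. This is only a sketch: the helper name `is_supported` and the two constants are hypothetical and not part of cognee; the range itself comes from the sentence above.

```python
import sys

# Supported range taken from the sentence above: Python 3.10 through 3.12.
MIN_SUPPORTED = (3, 10)
MAX_SUPPORTED = (3, 12)


def is_supported(version=None):
    """Return True if the (major, minor) version falls in the supported range.

    Hypothetical helper for illustration; defaults to the running interpreter.
    """
    major, minor = (version or sys.version_info)[:2]
    return MIN_SUPPORTED <= (major, minor) <= MAX_SUPPORTED


if not is_supported():
    print(
        f"Warning: Python {sys.version_info.major}.{sys.version_info.minor} "
        "is outside the 3.10 to 3.12 range listed above."
    )
```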

With uv

    uv pip install cognee

Detailed instructions can be found in our docs

💻 Basic Usage

Setup

    import os
    os.environ["LLM_API_KEY"] = "YOUR OPENAI_API_KEY"

You can also set the variables by creating a .env file from our template. To use a different LLM provider, see our documentation.
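To illustrate the .env route, here is a minimal, standard-library-only sketch that copies `KEY=VALUE` pairs from a file into `os.environ`. It makes no claim about how cognee itself reads the file. The function name `load_env_file` is hypothetical, and the parsing is deliberately simple (no multi-line values, no variable expansion). A dedicated package such as python-dotenv handles more cases.

```python
import os
from pathlib import Path


def load_env_file(path=".env"):
    """Copy KEY=VALUE pairs from a .env-style file into os.environ.

    Hypothetical helper for illustration. Returns the effective value of
    every key found in the file.
    """
    env_path = Path(path)
    if not env_path.exists():
        return {}
    loaded = {}
    for raw_line in env_path.read_text(encoding="utf-8").splitlines():
        line = raw_line.strip()
        # Skip blank lines, comments, and anything that is not KEY=VALUE.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        key = key.strip()
        value = value.strip().strip("\"'")
        # Variables already set in the environment take precedence.
        os.environ.setdefault(key, value)
        loaded[key] = os.environ[key]
    return loaded
```

Calling `load_env_file()` before `import cognee` would make a line such as `LLM_API_KEY="..."` in the file visible to the process, assuming the variable is not already set.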

Simple example

Python

This script will run the default pipeline:

    import cognee
    import asyncio


    async def main():
        # Add text to cognee
        await cognee.add("Cognee turns documents into AI memory.")

        # Generate the knowledge graph
        await cognee.cognify()

        # Add memory algorithms to the graph
        await cognee.memify()

        # Query the knowledge graph
        results = await cognee.search("What does cognee do?")

        # Display the results
        for result in results:
            print(result)


    if __name__ == '__main__':
        asyncio.run(main())

Example output:

Cognee turns documents into AI memory.
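If you want to exercise the add, cognify, search sequence without an API key (for example in a unit test), one option is to pass the client in as a parameter. The sketch below is an assumption-laden illustration: `remember_and_ask` and `InMemoryClient` are hypothetical names, not part of cognee, and the stand-in only echoes stored text rather than building a knowledge graph. The `memify` step from the script above is left out for brevity.

```python
import asyncio


async def remember_and_ask(client, text, query):
    """Run add -> cognify -> search on `client` and return the results as a list."""
    await client.add(text)
    await client.cognify()
    results = await client.search(query)
    return list(results)


class InMemoryClient:
    """Hypothetical stand-in with the same three coroutine names; not part of cognee."""

    def __init__(self):
        self.texts = []
        self.cognified = False

    async def add(self, text):
        self.texts.append(text)

    async def cognify(self):
        self.cognified = True

    async def search(self, query):
        # Echo stored texts once "cognified"; a real search does far more.
        return list(self.texts) if self.cognified else []


answers = asyncio.run(
    remember_and_ask(
        InMemoryClient(),
        "Cognee turns documents into AI memory.",
        "What does cognee do?",
    )
)
print(answers)
```

With the package installed and `LLM_API_KEY` set, passing the `cognee` module itself as `client` should drive the same three calls shown in the script above.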

Via CLI

Let's get the basics covered:

    cognee-cli add "Cognee turns documents into AI memory."
    cognee-cli cognify
    cognee-cli search "What does cognee do?"
    cognee-cli delete --all

or run

    cognee-cli -ui

Hosted Platform

Get up and running in minutes with automatic updates, analytics, and enterprise security.

- Sign up on cogwit

- Add your API key to the local UI and sync your data to Cogwit

Demos

- Cogwit Beta demo

- Simple GraphRAG demo

- cognee with Ollama

Contributing

Your contributions are at the core of making this a true open source project. Any contributions you make are greatly appreciated. See CONTRIBUTING.md for more information.

Code of Conduct

We are committed to making open source an enjoyable and respectful experience for our community. See CODE_OF_CONDUCT for more information.

Citation

We now have a paper you can cite:

    @misc{markovic2025optimizinginterfaceknowledgegraphs,
          title={Optimizing the Interface Between Knowledge Graphs and LLMs for Complex Reasoning},
          author={Vasilije Markovic and Lazar Obradovic and Laszlo Hajdu and Jovan Pavlovic},
          year={2025},
          eprint={2505.24478},
          archivePrefix={arXiv},
          primaryClass={cs.AI},
          url={https://arxiv.org/abs/2505.24478},
    }