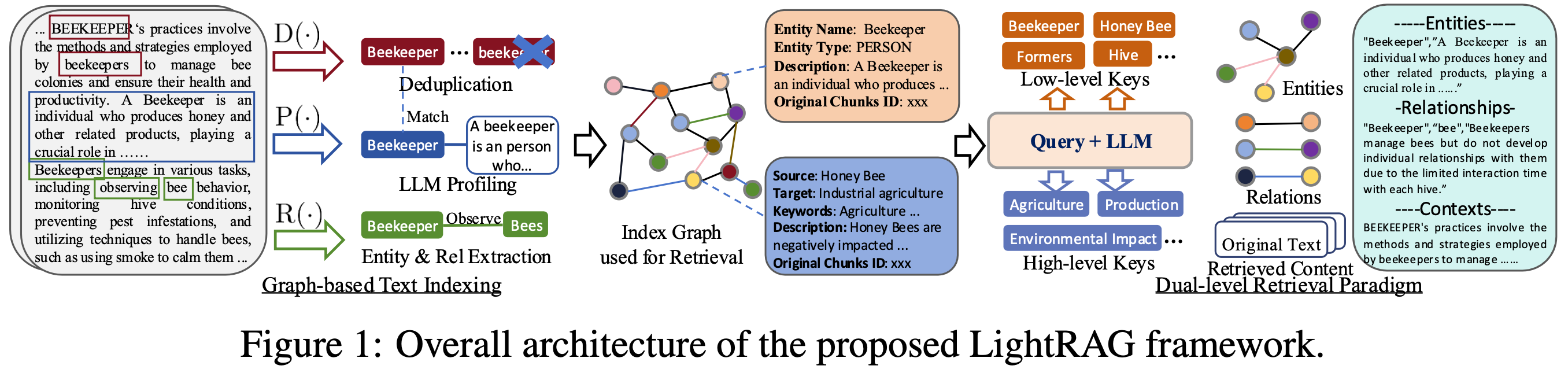

This repository hosts the code of LightRAG. The structure of the code is based on [nano-graphrag](https://github.com/gusye1234/nano-graphrag).